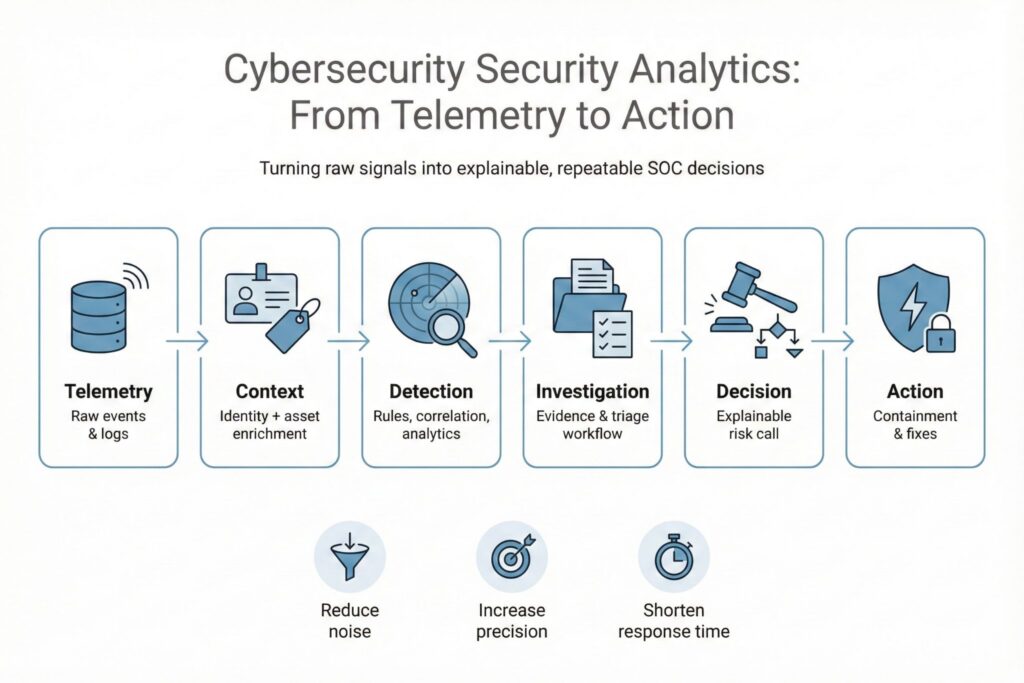

Security analytics is how a security team turns raw cybersecurity telemetry into decisions it can explain, repeat, and improve. Done well, it reduces time wasted on noise and increases the share of alerts that lead to clear actions.

What Security Analytics Means in Cybersecurity

Security analytics is not a single product category. It is a set of practices that connect four things that often live in separate places: security data, context about your environment, detection logic, and investigation workflows.

Log storage answers whether an event happened and preserves evidence. Security analytics answers what the event means for cyber risk and what to do next. The difference shows up in the output. A log platform returns records. A security analytics program returns decisions, such as isolating a host, revoking tokens, disabling a risky feature, or closing the alert with a documented reason.

A useful mental model is decision quality. If your team cannot explain why an alert fired, what data it relied on, and what would make it false, the alert is not ready for operations. Security analytics is the discipline of making that explanation possible for cybersecurity operations.

Inputs You Can Trust

More data is not the goal. The goal is the minimum set of signals that lets you answer high-value security questions with confidence. Start by mapping the threats you care about to what you can actually observe in your environment. Then prove that the data is complete enough to support a decision.

A practical baseline for many organizations includes:

- Identity and access events such as sign-ins, token issuance, privilege changes, and policy decisions

- Endpoint telemetry that shows process execution, command lines, persistence behavior, and security agent verdicts

- Network and cloud telemetry such as DNS, proxy, firewall logs, and cloud control plane activity

- Asset context such as ownership, criticality, and exposure so the SOC can prioritize quickly

Multi-tenant SaaS environments often limit packet visibility, so identity and cloud control plane logs become central. For OT and ICS, active scanning and agent deployment can break fragile systems or violate change control, so passive monitoring and tight baselines tend to be safer. In regulated sectors, data retention and access controls matter as much as detection quality. If analysts cannot access the evidence during an incident, the best analytics in the world does not help.

The table below is a simple way to keep ingestion disciplined.

| Data area | What it helps you decide | Common failure that breaks analytics |
|---|---|---|
| Identity | Is this the right user and device | Missing sign-in details or inconsistent user identifiers |
| Endpoint | Did code execute and persist | No command-line capture or short retention windows |
| Network and cloud | Did access happen and where from | NAT and proxy gaps that remove source context |
| Asset context | Is this important right now | Stale inventories and unknown ownership |

Before building detections, establish a small set of data health checks. You want to know whether critical logs are arriving, whether key fields are present, and whether timestamps are usable. Without that, you will spend your time tuning alerts that are failing because the inputs are broken.
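The three checks above (logs arriving, key fields present, timestamps usable) can be sketched as a small function. This is a minimal illustration, not a reference implementation: the field names in `REQUIRED_FIELDS`, the event shape (a list of dicts with ISO 8601 timestamps), and the lag and skew thresholds are all assumptions to replace with your own schema and tolerances.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical field names; substitute whatever your log schema actually uses.
REQUIRED_FIELDS = ("timestamp", "user_id", "source_ip")


def check_source_health(events, now, max_lag=timedelta(minutes=15),
                        max_future_skew=timedelta(minutes=5)):
    """Return a list of human-readable problems for one log source."""
    if not events:
        return ["no events received"]

    problems = []
    parsed = []
    for i, event in enumerate(events):
        # Check 1: key fields are present and non-empty.
        missing = [f for f in REQUIRED_FIELDS if not event.get(f)]
        if missing:
            problems.append(f"event {i}: missing {', '.join(missing)}")
        raw_ts = event.get("timestamp")
        if not raw_ts:
            continue  # already reported as a missing field

        # Check 2: timestamps parse, carry a timezone, and are not in the future.
        try:
            ts = datetime.fromisoformat(raw_ts)
        except (TypeError, ValueError):
            problems.append(f"event {i}: unparseable timestamp")
            continue
        if ts.tzinfo is None:
            problems.append(f"event {i}: timestamp has no timezone")
            continue
        if ts - now > max_future_skew:
            problems.append(f"event {i}: timestamp is in the future")
        parsed.append(ts)

    # Check 3: the source is still delivering recent events.
    if parsed and now - max(parsed) > max_lag:
        problems.append("source is stale: newest event older than max_lag")
    return problems


if __name__ == "__main__":
    sample = [{"timestamp": "2024-01-01T11:55:00+00:00",
               "user_id": "u1", "source_ip": "10.0.0.1"}]
    print(check_source_health(sample, datetime.now(timezone.utc)))
```

Running a check like this per priority source before tuning any detection makes it easier to tell a noisy rule apart from a broken input.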

Detections That Stand Up In Operations

Security analytics uses different analytic approaches. The right mix depends on your visibility, your tolerance for false positives, and how much explanation your organization requires.

| Approach | Best used for | Where it disappoints |
|---|---|---|
| Rule and correlation | Known attacker patterns and policy violations | Poor results when context is missing |
| Behavior baselines | Account misuse and lateral movement signals | Baselines drift during organizational change |
| Anomaly and ML techniques | High-volume telemetry where manual rules do not scale | Hard to validate and hard to explain |
| Curated detection content | Fast coverage for common techniques | Becomes stale unless maintained |

Regardless of approach, every detection should ship with a short operational contract. If a detection cannot be tested on real data, it will either spam the SOC or miss what it was built to catch. Use a detection card so the SOC and engineering share the same expectations:

- Goal and scope, including what it does not detect

- Required data sources and critical fields

- Assumptions and expected normal behavior

- Benign lookalikes, including business processes that mimic the signal

- Validation steps using known-good and known-bad examples

- Triage steps that an analyst can complete quickly

- Response actions and who owns them

- Failure modes such as missing logs, clock skew, or identity gaps
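One way to keep the card honest is to store it as structured data and refuse to promote a detection while any section is empty. The sketch below assumes a hypothetical `DetectionCard` class whose attribute names simply mirror the list above; it is an illustration of the idea, not a standard format.

```python
from dataclasses import dataclass, field, fields


@dataclass
class DetectionCard:
    """One operational contract per detection; attribute names are illustrative."""
    name: str
    goal_and_scope: str = ""
    required_data: list[str] = field(default_factory=list)
    assumptions: str = ""
    benign_lookalikes: list[str] = field(default_factory=list)
    validation_steps: list[str] = field(default_factory=list)
    triage_steps: list[str] = field(default_factory=list)
    response_actions: list[str] = field(default_factory=list)  # include the owner in each entry
    failure_modes: list[str] = field(default_factory=list)

    def missing_sections(self):
        """Names of sections that are still empty."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def ready_for_operations(self):
        """A card is ready only when every section has content."""
        return not self.missing_sections()


if __name__ == "__main__":
    draft = DetectionCard(name="new-geo-then-consent",
                          goal_and_scope="Token abuse after sign-in from a new geography")
    print(draft.ready_for_operations(), draft.missing_sections())
```

A check like `ready_for_operations()` could run in review before a detection is enabled, so gaps in the card surface before the alert reaches an analyst.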

This is where security analytics becomes a people-first capability. Analysts get fewer ambiguous alerts. Engineers get precise feedback when a detection fails. Leaders get evidence they can use to justify changes that reduce cyber risk.

How Cybersecurity Analytics Runs In a SOC

Security analytics succeeds when the path from alert to decision is short and predictable. That requires ownership. Detection engineering typically owns data onboarding and detection content. The SOC owns triage and investigation workflows. Platform, identity, and cloud teams own many of the controls and logging settings that make analytics possible.

Mini-scenario

An analyst sees an alert for a successful sign-in from a new geography, followed by a rapid change in application consent permissions for the same account. The analyst correlates identity events with cloud audit logs and checks endpoint telemetry for the user’s primary device. There is no recent device enrollment or trusted device signal for that account, and the consent action grants broad mailbox access.
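The alert logic in this scenario can be sketched as a simple correlation rule: a successful sign-in from a country not in the user's baseline, followed within a short window by a consent change on the same account. The event shape, the type names `sign_in_success` and `consent_granted`, the per-user country baseline, and the 30-minute window are all assumptions made for illustration.

```python
from datetime import datetime, timedelta


def new_geo_then_consent(events, known_countries, window=timedelta(minutes=30)):
    """Return (sign_in, consent) pairs that match the scenario.

    events: dicts sorted by "time", with hypothetical keys
            "type", "user", "time", and (for sign-ins) "country".
    known_countries: read-only baseline mapping user -> set of usual countries.
    """
    hits = []
    recent_new_geo = {}  # user -> latest sign-in from a country outside the baseline
    for event in events:
        user = event["user"]
        if event["type"] == "sign_in_success":
            if event["country"] not in known_countries.get(user, set()):
                recent_new_geo[user] = event
        elif event["type"] == "consent_granted":
            sign_in = recent_new_geo.get(user)
            if sign_in and event["time"] - sign_in["time"] <= window:
                hits.append((sign_in, event))
    return hits


if __name__ == "__main__":
    t0 = datetime(2024, 1, 1, 9, 0)
    sample = [
        {"type": "sign_in_success", "user": "alice", "time": t0, "country": "BR"},
        {"type": "consent_granted", "user": "alice", "time": t0 + timedelta(minutes=4)},
    ]
    print(new_geo_then_consent(sample, {"alice": {"US"}}))
```

Legitimate travel would trigger this rule as written, which is why the device and enrollment checks the analyst performs remain part of triage rather than the rule itself.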

Decision and action

The analyst treats the activity as likely token-based abuse. They revoke active sessions, disable user-driven consent for the tenant group that contains the user, and block the newly authorized application. They open a ticket for the identity team to review conditional access policy gaps and to confirm whether the user was traveling.

Outcome and learning

The post-incident review finds that the alert lacked one field that would have made the call faster: the identity risk score from the provider. Engineering adds that enrichment and updates the detection card with two common benign lookalikes: legitimate travel and approved third-party integration onboarding. The SOC also adds a fast triage query that checks for recent password resets, MFA method changes, and new device registrations in one view.
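A triage view of that kind might look like the sketch below. It assumes audit events are already available as dicts with hypothetical `user`, `type`, and `time` keys, and that the three event type names match your identity provider's audit schema after mapping; the seven-day lookback is likewise an arbitrary starting point.

```python
from datetime import datetime, timedelta

# Hypothetical event type names; map them to your identity provider's audit schema.
TRIAGE_EVENT_TYPES = ("password_reset", "mfa_method_change", "device_registration")


def triage_view(audit_events, user, alert_time, lookback=timedelta(days=7)):
    """Collect recent account-change events for one user into a single dict."""
    view = {event_type: [] for event_type in TRIAGE_EVENT_TYPES}
    for event in audit_events:
        in_window = alert_time - lookback <= event["time"] <= alert_time
        if event["user"] == user and event["type"] in view and in_window:
            view[event["type"]].append(event["time"])
    for times in view.values():
        times.sort()
    return view


if __name__ == "__main__":
    alert_time = datetime(2024, 1, 10, 12, 0)
    sample = [{"user": "alice", "type": "password_reset",
               "time": datetime(2024, 1, 9, 8, 0)}]
    print(triage_view(sample, "alice", alert_time))
```

An empty list under every key is itself a useful signal: no recent reset, MFA change, or device registration narrows the likely explanations quickly.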

In small teams, the same person may wear multiple hats. The key is still separation of concerns. Even a one-person SOC benefits from a clear boundary between building detections and responding to alerts, because it forces documentation and reduces fragile tribal knowledge.

Measuring And Improving Security Analytics

Counting alerts and dashboards is not measurement. Measurement asks whether analytics improves security outcomes and whether it does so with predictable effort. You want a handful of metrics that connect inputs, process quality, and results.

Use metrics that are precise enough to calculate the same way every month:

- Time to detect and time to respond for incidents where analytics provided the first actionable signal

- Alert precision, defined as the share of alerts that become confirmed incidents or validated suspicious activity after triage

- Data freshness and completeness for priority sources, including ingestion delays and missing critical fields

- Coverage mapping, where each high-priority threat technique has at least one detection or an explicitly accepted gap
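To calculate these the same way every month, it helps to pin the definitions down in code. The sketch below covers alert precision, median time to detect, and coverage gaps. The disposition labels, the incident keys (`first_signal`, `started_at`, `detected_at`), and the technique names are assumptions; only the definitions follow the list above.

```python
from datetime import datetime, timedelta
from statistics import median

# Hypothetical triage outcomes that count as a "good" alert.
TRUE_POSITIVE_DISPOSITIONS = {"confirmed_incident", "validated_suspicious"}


def alert_precision(dispositions):
    """Share of triaged alerts that were confirmed or validated.

    Alerts not yet triaged (None) are excluded; returns None if nothing is triaged.
    """
    triaged = [d for d in dispositions if d is not None]
    if not triaged:
        return None
    return sum(d in TRUE_POSITIVE_DISPOSITIONS for d in triaged) / len(triaged)


def median_time_to_detect(incidents):
    """Median (detected_at - started_at) where analytics gave the first signal."""
    seconds = [(i["detected_at"] - i["started_at"]).total_seconds()
               for i in incidents if i.get("first_signal") == "analytics"]
    return timedelta(seconds=median(seconds)) if seconds else None


def uncovered_techniques(priority_techniques, detections_by_technique, accepted_gaps):
    """Priority techniques with neither a detection nor an explicitly accepted gap."""
    return [t for t in priority_techniques
            if not detections_by_technique.get(t) and t not in accepted_gaps]


if __name__ == "__main__":
    print(alert_precision(["confirmed_incident", "false_positive", None]))
    print(uncovered_techniques(["token theft", "malicious consent"],
                               {"token theft": ["det-1"]}, set()))
```

Median is used here instead of mean so that one long-running incident does not dominate the monthly figure; that choice is a suggestion, not something the metric definition requires.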

Do not treat these numbers as a scoreboard for analysts. They are control signals for the program. If precision drops, inspect what changed. It might be a new business workflow, an identity policy change, or a missing log field after an upgrade. If time to detect improves but time to respond does not, the bottleneck may be access to response tools or unclear ownership.

Frequently Asked Questions (FAQ)

What is cybersecurity analytics, and how is it different from a SIEM?

Cybersecurity analytics is the practice of turning security data into decisions you can defend and repeat. A SIEM is mainly the log platform (collect, store, search); analytics is what adds context, detection logic, and an operational path to action.

Which data sources should we start with?

Start with identity (sign-ins, token events, privilege changes) and endpoint execution data (processes and command lines). Add core network and cloud visibility (DNS, proxy and firewall logs, cloud control plane logs) and basic asset context such as owner and criticality. Without that context, prioritization breaks.

Which metrics matter most?

Two metrics carry most of the signal: time to detect and time to respond for incidents where analytics provided the first actionable lead, and alert precision (how many alerts become confirmed incidents or validated suspicious activity after triage). Pair them with a simple data-quality view (missing fields, ingestion delay) so you can explain why performance moved up or down.

Who owns security analytics?

The SOC owns triage, investigation, and the decision to escalate or contain. Detection engineering owns onboarding standards, detection content, testing, and the documentation that makes alerts operable. Platform, identity, and cloud teams own the controls and logging settings that make the data trustworthy, and they usually execute the durable fixes analytics points to.
