Penetration Testing is a permission-based cybersecurity assessment that tries to reach a real business impact the same way an attacker would, then leaves behind evidence your team can use to fix the issue and verify the fix. The key difference from many other cybersecurity activities is the standard of proof. A good test does not stop at a potential weakness. It shows a working path, documents the conditions that made it possible, and explains what closes the path without breaking production.

This matters because most organizations do not suffer from a lack of findings. They suffer from uncertainty about which weaknesses combine into an incident, which controls actually stop an intruder, and what should be fixed first when engineering time is limited. A well-run engagement reduces that uncertainty.

Evidence Standards In Penetration Testing

Penetration Testing is not defined by a toolset, a checklist, or the number of issues found. It is defined by validated impact under agreed rules of engagement. Impact can mean an account takeover, access to regulated data, an unauthorized change in the cloud control plane, or lateral movement from a low-trust segment into a high-trust one. The work is successful when it replaces assumptions with evidence that holds up in an audit, a post-incident review, and an engineering triage.

In practical terms, a penetration test answers questions executives and security leaders actually ask.

- Could an attacker chain these conditions in our environment?

- What is the first reliable foothold?

- Which control fails first, and which control slows the attacker down?

- What single fix breaks the chain with the least operational risk?

A short example shows the difference between theory and proof. A tester finds an internet-exposed administrative endpoint that was intended to be reachable only through a VPN. During validation they confirm the exposure is consistent and not a temporary misrouting. They then identify an authorization flaw in an API that allows role changes for a subset of accounts. The tester demonstrates the smallest possible proof of impact, such as access to a single customer record identifier through a test account, and stops before unnecessary data access. The report ties the issue to a specific control failure, proposes a targeted fix, and defines exactly how to retest. Engineering ships the fix, and the retest confirms the chain is closed.
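The retest step in this example can be written down as a small executable check. The sketch below is a minimal illustration, assuming the fix is expected to make the server deny the role change. The `send_role_change` callable, the status codes treated as a denial, and the account name are hypothetical stand-ins, not part of any real API.

```python
from dataclasses import dataclass
from typing import Callable

# Status codes treated as "the server refused the action". This is an
# assumption; the retest plan should state the exact expected response.
DENIED_STATUSES = {401, 403}


@dataclass(frozen=True)
class RetestResult:
    chain_closed: bool
    observed_status: int
    note: str


def retest_role_change(
    send_role_change: Callable[[str, str], int],
    test_account: str,
    target_role: str,
) -> RetestResult:
    """Replay the original proof with the same test account and report
    whether the server now denies the role change."""
    status = send_role_change(test_account, target_role)
    closed = status in DENIED_STATUSES
    note = "role change denied" if closed else "role change still accepted"
    return RetestResult(chain_closed=closed, observed_status=status, note=note)


# Stand-in transports for illustration. A real retest would wrap the HTTP
# client agreed in the rules of engagement.
def fixed_server(account: str, role: str) -> int:
    return 403


def vulnerable_server(account: str, role: str) -> int:
    return 200


print(retest_role_change(fixed_server, "qa-tester-01", "admin"))
print(retest_role_change(vulnerable_server, "qa-tester-01", "admin"))
```

Passing the transport in as a callable keeps the check independent of any particular HTTP library and makes the pass condition explicit: the same request that proved the issue must now be refused.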

Scoping Penetration Testing Around Risk

Scope is the hidden determinant of whether Penetration Testing produces value or friction. A scope that is too broad pushes the team toward shallow coverage or risky shortcuts. A scope that is too narrow can miss the routes attackers actually use, especially routes that start with identity and configuration rather than a classic software vulnerability.

A productive scoping conversation aligns three things. The first is the business outcome you want to prevent. The second is what can be tested safely without harming operations. The third is the level of access you want to simulate, such as no access, a standard user, or an assumed compromised workstation.

Use scoping questions that force decisions.

- Which user journey, dataset, or system boundary matters most?

- Which identities matter most, including admins, support roles, service accounts, and CI tokens?

- What impact is unacceptable, such as performance degradation, lockouts, or touching production data?

- Which logs and alerts will defenders review during the test, and which evidence will be required for retest?

Different environments demand different constraints. Multi-tenant SaaS needs strict rules around test tenants, isolation checks, and rate limiting so testing does not become a customer impact event. Cloud-heavy organizations need explicit agreement about which IAM actions are allowed, how temporary credentials will be handled, and what changes must be rolled back. This level of rigor matters because the “Verizon Data Breach Investigations Report” and similar recent industry data consistently place credential abuse and misconfiguration among the leading contributors to breaches. OT and ICS environments require a safety-first stance where availability and physical process integrity can outweigh the value of aggressive exploitation.
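Constraints like these are easier to enforce when they are written as data rather than left in a statement of work. The following is a minimal sketch, assuming a hypothetical `RulesOfEngagement` structure; the host patterns, the rate limit, and the choice of IAM actions are illustrative values, and real ones come from the signed scope.

```python
from dataclasses import dataclass, field
from fnmatch import fnmatch


@dataclass(frozen=True)
class RulesOfEngagement:
    # All values here are illustrative assumptions.
    in_scope_hosts: tuple[str, ...]
    allowed_iam_actions: frozenset[str]
    prohibited_actions: frozenset[str] = field(default_factory=frozenset)
    max_requests_per_second: int = 5

    def host_in_scope(self, host: str) -> bool:
        # Shell-style patterns, e.g. "*.test.example.com".
        return any(fnmatch(host, pattern) for pattern in self.in_scope_hosts)

    def action_allowed(self, action: str) -> bool:
        # Prohibitions win over allowances, so a broad allow list cannot
        # accidentally permit something the client ruled out.
        if action in self.prohibited_actions:
            return False
        return action in self.allowed_iam_actions


roe = RulesOfEngagement(
    in_scope_hosts=("*.test.example.com",),
    allowed_iam_actions=frozenset({"iam:ListRoles", "s3:GetObject"}),
    prohibited_actions=frozenset({"iam:DeleteUser"}),
)

print(roe.host_in_scope("api.test.example.com"))   # in scope
print(roe.host_in_scope("api.prod.example.com"))   # out of scope
print(roe.action_allowed("iam:DeleteUser"))        # explicitly prohibited
```

Anything not on the allow list is refused by default, which matches the idea that write actions in a cloud control plane need explicit agreement before they are attempted.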

The table below is a practical way to select a test focus based on risk and the output you need.

| Penetration Testing Focus | Best Fit In Cybersecurity Programs | Typical Starting Access | Non-Negotiable Output |
| --- | --- | --- | --- |
| External perimeter | Internet exposure, credential misuse, remote management hardening | No access | Validated entry points, proof of reachable impact |
| Internal foothold | Lateral movement, privilege escalation, segmentation strength | Low-privilege user or assumed compromise | Attack paths to high-value systems and identities |
| Web and API | Authorization, session handling, business logic | Test users and safe data | Reproduction steps, safe proof, engineering-ready fixes |
| Cloud control plane | IAM, storage exposure, misconfiguration chaining | Read-only plus limited write | Verified paths to data access or admin actions |
| Boundary validation | Whether network or identity boundaries actually hold | Known routes and constraints | Evidence of allowed paths and blocked paths |

Workflow That Keeps Testing Safe

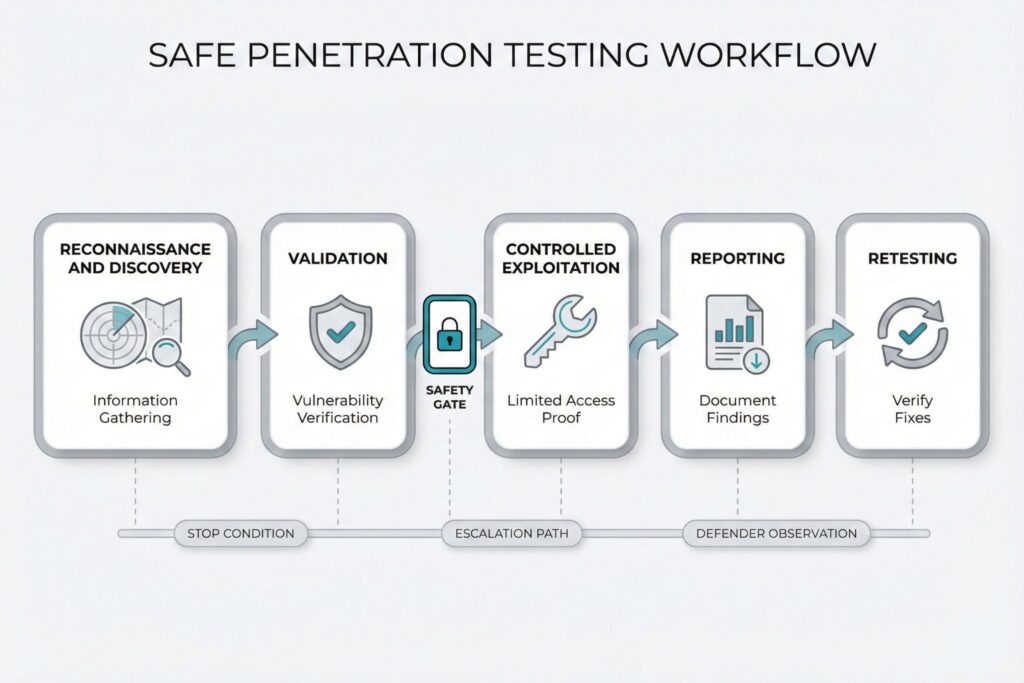

A credible Penetration Testing engagement has a workflow that looks predictable from the outside even if the technical moves differ from target to target.

Reconnaissance and discovery map what exists, what is exposed, and which identities and interfaces matter. Validation confirms a weakness is real and reachable in your environment. Controlled exploitation demonstrates impact within the agreed rules. Reporting translates the work into actions, and retesting verifies the actions closed the path.

The gap between reckless and professional work shows up in governance, not bravado. A professional tester keeps a decision log, limits blast radius, avoids destructive actions, and treats production data as toxic. Proof should be proportional. Often it is enough to show access to a single record, an object identifier, or an authorization boundary. Extracting datasets is rarely necessary and frequently unacceptable.
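A decision log does not need special tooling. The sketch below appends one JSON line per decision with a UTC timestamp and flags any step that touched more than a single record, in line with proportional proof. The field names and the one-record threshold are assumptions for illustration, not a standard format.

```python
import json
from datetime import datetime, timezone
from typing import Optional


def record_decision(
    log: list[str],
    action: str,
    reason: str,
    records_touched: int,
    when: Optional[datetime] = None,
) -> str:
    """Append one decision as a JSON line and return that line."""
    entry = {
        "time_utc": (when or datetime.now(timezone.utc)).isoformat(),
        "action": action,
        "reason": reason,
        "records_touched": records_touched,
        # Proportional proof: anything beyond one record needs a second look.
        "needs_review": records_touched > 1,
    }
    line = json.dumps(entry, sort_keys=True)
    log.append(line)
    return line


decisions: list[str] = []
record_decision(
    decisions,
    action="read one customer record identifier via test account",
    reason="minimal proof of broken authorization",
    records_touched=1,
)
print(decisions[0])
```

Keeping timestamps in UTC makes it straightforward to line the log up with defender-side logs during review.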

Operational coordination is part of cyber risk management. The engagement should have a stop condition, an escalation path, and a clear plan for when defenders will observe activity. Even when the goal is preventive cybersecurity rather than live response testing, this coordination reveals which controls work under pressure and which ones only look good in dashboards.
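A stop condition is most useful when it is a concrete threshold rather than a judgment call made mid-test. Here is a minimal sketch, assuming the engagement tracks server errors and lockouts of non-test accounts; the `StopLimits` name and every threshold value are hypothetical and would be agreed with the system owner beforehand.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StopLimits:
    max_error_rate: float = 0.05   # fraction of responses that are server errors
    max_lockouts: int = 0          # lockouts of non-test accounts tolerated
    min_sample: int = 50           # ignore error rate until enough requests sent


def should_stop(
    total_requests: int,
    server_errors: int,
    lockouts: int,
    limits: StopLimits = StopLimits(),
) -> tuple[bool, str]:
    """Return (stop, reason) for the current counters."""
    if lockouts > limits.max_lockouts:
        return True, "account lockouts exceeded the agreed limit"
    enough_data = total_requests > 0 and total_requests >= limits.min_sample
    if enough_data and server_errors / total_requests > limits.max_error_rate:
        return True, "server error rate exceeded the agreed limit"
    return False, "within agreed limits"


print(should_stop(total_requests=200, server_errors=4, lockouts=0))
print(should_stop(total_requests=200, server_errors=30, lockouts=0))
```

When the function returns a stop, the reason string is what goes into the escalation message, so the tester and the defenders are looking at the same trigger.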

Choosing Between Cybersecurity Test Types

Confusion in procurement is common because the labels sound similar and many vendors blur them. The simplest way to reduce wasted spend is to match the service to the decision you need to make, and to check that the vendor's methodology aligns with established references such as “NIST SP 800-115” or the “OWASP Web Security Testing Guide”.

| Activity | Primary Goal | What You Receive | Common Failure Mode |
| --- | --- | --- | --- |
| Vulnerability scanning | Fast coverage of known issues | Lists of potential weaknesses with severity estimates | Treating volume as risk reduction |
| Penetration Testing | Proof of a realistic attack path | Validated impact plus reproduction details | Buying it as a compliance checkbox |
| Red team exercise | Stress-test detection and response | Lessons about defenders, visibility, and containment | Running it before basic hygiene is stable |

Scanning is great for continuous visibility and patching discipline. Penetration Testing is the right choice when you need to know whether a specific business risk is reachable through chaining. Red teaming is the right choice when you need to measure detection, escalation, and containment under adversary pressure.

Reporting That Speeds Cyber Fixes

The report is the deliverable that determines whether the engagement improves cybersecurity or becomes expensive theater. Strong reporting links technical details to decisions, separates individual issues from attack chains, and states what was validated versus what was inferred. It also preserves enough evidence that another engineer can reproduce the issue without guesswork.

A practical report contains an executive summary, a prioritized list of validated chains, and a section engineers can act on immediately. For each chain it should include environment assumptions, affected components, exact reproduction steps, and a clear retest plan.
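One way to keep every chain complete is to treat the per-chain contents as a record with required sections and to check for gaps before the report ships. The sketch below assumes a hypothetical `ValidatedChain` structure whose fields mirror the items listed above; the field names are illustrative.

```python
from dataclasses import dataclass, field, fields


@dataclass
class ValidatedChain:
    title: str
    environment_assumptions: list[str] = field(default_factory=list)
    affected_components: list[str] = field(default_factory=list)
    reproduction_steps: list[str] = field(default_factory=list)
    retest_plan: str = ""


def missing_sections(chain: ValidatedChain) -> list[str]:
    """Return the names of sections that are still empty, in field order."""
    return [f.name for f in fields(chain) if not getattr(chain, f.name)]


draft = ValidatedChain(
    title="Exposed admin endpoint plus role-change authorization flaw",
    affected_components=["admin endpoint", "role management API"],
    reproduction_steps=[
        "Reach the admin endpoint without the VPN",
        "Submit a role change as a standard test account",
    ],
)

print(missing_sections(draft))
```

For this draft the check reports that the environment assumptions and the retest plan are still missing, which is the kind of gap that otherwise surfaces only when engineering tries to reproduce the issue.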

A useful evidence pack is compact and defensible.

- Sanitized request samples that show the failing authorization decision

- Time-aligned logs tied to test accounts and known source addresses

- Command history or tooling output only when it clarifies reproduction

- Screenshots only when they add clarity that text cannot provide
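Sanitizing a request sample usually means removing credentials while keeping the parts that show the failing authorization decision. The following is a minimal sketch that redacts a few sensitive headers from a raw HTTP request. The header list is an assumption (`X-API-Key` in particular is a common convention, not a standard header), and request bodies may need their own redaction rules.

```python
# Header names compared in lowercase. Extend this set for the target system.
SENSITIVE_HEADERS = {"authorization", "cookie", "x-api-key"}


def sanitize_request(raw: str) -> str:
    """Replace the values of sensitive headers with a placeholder.

    Only lines before the first blank line are treated as headers, so the
    request body is passed through unchanged.
    """
    cleaned = []
    in_headers = True
    for line in raw.splitlines():
        if in_headers and line.strip() == "":
            in_headers = False
        if in_headers and ":" in line:
            name = line.partition(":")[0]
            if name.strip().lower() in SENSITIVE_HEADERS:
                cleaned.append(f"{name}: [REDACTED]")
                continue
        cleaned.append(line)
    return "\n".join(cleaned)


sample = (
    "POST /api/roles HTTP/1.1\n"
    "Host: app.test.example.com\n"
    "Authorization: Bearer abc123\n"
    "Cookie: session=xyz\n"
    "\n"
    '{"role": "admin"}'
)

print(sanitize_request(sample))
```

The request line, host, and body survive intact, so a reader can still see which call was made and what it asked for, while the token and session cookie are gone.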

Different audiences need different slices of the same truth.

| Audience | What They Need From Penetration Testing | What Reduces Trust |
| --- | --- | --- |
| Engineering | Reproduction steps, affected code or configuration, safe proof | Vague risk language without mechanics |
| Security leadership | Attack chain, business impact, ownership, retest criteria | Long lists with no prioritization |
| Operations | Timing, stability notes, triggered alerts, rollback steps | Advice that ignores uptime constraints |
| Compliance and audit | Scope, rules of engagement, evidence of validation | Unclear boundaries and undocumented assumptions |

Turning One Test Into A Repeatable Program

Penetration Testing creates the most value when it is run as a program with feedback loops, not as an annual event. The practical goal is to shrink repeatable attack chains, shorten remediation cycles, and harden the surfaces where the organization actually gets hurt.

Start with capacity reality. If engineering can only fix a small number of issues per cycle, scope the test to surfaces where a closed chain changes risk. Make retesting a default part of the engagement rather than an optional add-on. Track a small set of metrics you can verify, such as time from validated report to accepted fix, percentage of high-impact chains closed and retested, and recurrence of the same root causes.
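The three metrics named above are simple to compute once each validated chain is tracked as a record. The sketch below is one possible way to do it. The `Chain` fields, the root-cause labels, and the sample dates are made up for illustration and are not real program data.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class Chain:
    root_cause: str
    high_impact: bool
    reported: date
    fix_accepted: Optional[date] = None
    retest_passed: bool = False


def program_metrics(chains: list[Chain]) -> dict:
    fix_days = [(c.fix_accepted - c.reported).days for c in chains if c.fix_accepted]
    high = [c for c in chains if c.high_impact]
    closed = [c for c in high if c.fix_accepted and c.retest_passed]
    causes = Counter(c.root_cause for c in chains)
    return {
        "mean_days_to_accepted_fix": sum(fix_days) / len(fix_days) if fix_days else None,
        "pct_high_impact_closed_and_retested": 100 * len(closed) / len(high) if high else None,
        # A root cause seen in more than one chain counts as recurring.
        "recurring_root_causes": sorted(k for k, n in causes.items() if n > 1),
    }


# Illustrative sample data only.
chains = [
    Chain("missing authorization check", True, date(2025, 1, 6), date(2025, 1, 16), True),
    Chain("missing authorization check", True, date(2025, 1, 6), date(2025, 1, 26), False),
    Chain("over-permissive IAM role", False, date(2025, 1, 6)),
]

print(program_metrics(chains))
```

With this sample, the two accepted fixes took 10 and 20 days, so the mean is 15 days; one of the two high-impact chains is both fixed and retested, giving 50 percent; and the authorization root cause appears twice, so it is reported as recurring.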

To keep the program current through 2025 and 2026, bias your scope toward where attackers and incidents keep moving. That typically means identity and access, exposed management planes, APIs that power mobile and partner integrations, and cloud misconfiguration chains. If your product uses or exposes AI features, treat that surface as a first-class risk. Use the “OWASP Top 10 for LLM Applications” to audit the authorization model, data boundaries, prompt and tool invocation pathways, and the specific controls that prevent unintended data disclosure and unsafe actions.

Before you buy a test, ask questions that separate a real Penetration Testing service from a repackaged scan.

- What counts as validated proof, and what artifacts will you deliver?

- How do you minimize production data access while still proving impact?

- How will you structure the retest, and what evidence will prove the chain is closed?

When those answers are clear, Penetration Testing becomes a predictable part of cybersecurity engineering. You get fewer arguments, faster fixes, and a growing library of closed attack paths that you can prove stayed closed.

Frequently Asked Questions (FAQ)

How often should you run a penetration test?

Run it after material changes that alter attack paths, such as major releases, new authentication flows, new integrations, cloud permission model changes, or infrastructure migrations. For stable systems, many teams use an annual cadence for core surfaces and add smaller targeted tests around high-risk changes.

What should you require as proof from a penetration test?

Require reproducible steps tied to specific assets, identities, and conditions, plus a minimal proof artifact that demonstrates impact without unnecessary data access. Ask for a retest recipe that states exactly what must fail after remediation.

What should the rules of engagement include?

Include in-scope assets and identities, allowed techniques, explicit prohibited actions, safety constraints, and stop-testing conditions.

What does a clean retest look like, and when should you schedule it?

A clean retest verifies the original attack chain no longer works using the same evidence and assumptions, without expanding scope or introducing new findings. Schedule it as soon as the fix is deployed and while logs are still available, ideally in the same release window.

How do you limit exposure of production data during testing?

Prefer test tenants, synthetic data, and least-privilege test accounts that mirror real roles. If production must be used, restrict proof to the smallest possible sample, log every access, and avoid bulk queries or downloads.

When is a scan enough, and when is a pen test the wrong tool?

A scan is enough when your goal is broad coverage of known issues and patch hygiene across many assets. A pen test is not the right tool when you need continuous monitoring, asset discovery at scale, or response readiness validation. Those needs are better handled by scanning programs, exposure management, and red-team-style exercises respectively.
