Author: James Calloway, CISSP, CISM — Senior Cybersecurity Risk Advisor. More than 14 years of experience helping organizations translate cyber threats into financial decision frameworks. Specializes in risk quantification, cyber insurance modeling, and board-level risk reporting across financial services and manufacturing sectors.

What Vulnerability Assessments Actually Measure

A vulnerability assessment is a structured process of locating, classifying, and ranking security weaknesses across an organization’s IT environment. The output is a prioritized list of findings that security and operations teams can act on — patching systems, adjusting configurations, or formally accepting risk based on business context.

Penetration testing goes further: testers chain weaknesses into actual attack paths. An assessment does not do that. It maps the terrain. The question it answers is narrower and more operational — where do known weaknesses exist, and which ones carry the most risk for this specific environment?

Assessments typically cover network infrastructure, operating systems, applications, databases, and cloud configurations. Depending on scope, they may also include OT/ICS devices, IoT endpoints, or Active Directory environments. Scope must be defined before scanning begins. Undefined scope produces results that are difficult to prioritize and nearly impossible to act on.
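One way to make a scope definition concrete is to express it as data that the scanning tooling enforces. The sketch below is a minimal illustration, assuming scope is written as IPv4 CIDR ranges. The network ranges and the function names `is_in_scope` and `build_target_list` are hypothetical, and exclusions always win over inclusions.

```python
import ipaddress

# Hypothetical scope definition: networks approved for scanning, plus
# networks explicitly excluded (for example, a fragile OT segment).
IN_SCOPE = [
    ipaddress.ip_network("10.0.0.0/16"),
    ipaddress.ip_network("192.168.50.0/24"),
]
EXCLUDED = [ipaddress.ip_network("10.0.200.0/24")]


def is_in_scope(address: str) -> bool:
    """True if the address is inside an in-scope network and outside every exclusion."""
    ip = ipaddress.ip_address(address)
    if any(ip in net for net in EXCLUDED):
        return False
    return any(ip in net for net in IN_SCOPE)


def build_target_list(candidates: list[str]) -> list[str]:
    """Filter discovered addresses down to the approved scan targets."""
    return [addr for addr in candidates if is_in_scope(addr)]


if __name__ == "__main__":
    discovered = ["10.0.1.15", "10.0.200.7", "172.16.4.2", "192.168.50.20"]
    print(build_target_list(discovered))
```

Running it prints `['10.0.1.15', '192.168.50.20']`. The excluded segment and the out-of-scope host are dropped before any scanner sees them.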

How Assessments Are Conducted

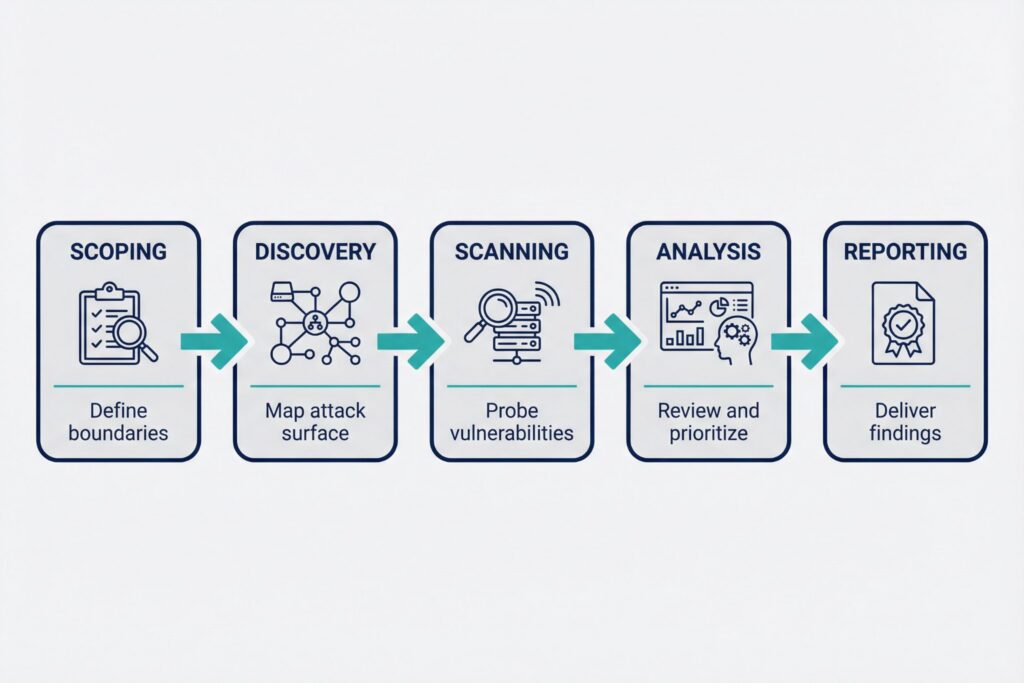

Most assessments follow a structured sequence: scoping, discovery, scanning, analysis, and reporting.

Discovery and Scanning

Discovery maps the attack surface by identifying live hosts, open ports, and exposed services within the defined scope. Scanners then probe each asset against a database of known vulnerabilities, matching software versions, configurations, and exposed services to entries in the National Vulnerability Database (NIST NVD) and vendor advisories. After automated scanning, analysts review findings for false positives, apply business context, and assign priority.
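The version-matching step can be sketched as a comparison of an asset inventory against advisory records. This is a simplified illustration, not how any particular scanner works. It assumes purely numeric dotted version strings and a hypothetical advisory format with a half-open affected range `[introduced, fixed)`; the advisory ID and product names are placeholders. Production scanners also have to handle vendor backports, non-numeric versions, and CPE-style product naming, which is one reason analyst review for false positives follows the automated pass.

```python
from collections.abc import Iterable, Iterator
from dataclasses import dataclass


def parse_version(text: str) -> tuple[int, ...]:
    """Turn a dotted numeric version such as '2.4.49' into a comparable tuple."""
    return tuple(int(part) for part in text.split("."))


@dataclass
class Advisory:
    # Hypothetical advisory record: the product is vulnerable in [introduced, fixed).
    advisory_id: str
    product: str
    introduced: str
    fixed: str


@dataclass
class InstalledSoftware:
    host: str
    product: str
    version: str


def match_findings(
    inventory: Iterable[InstalledSoftware], advisories: list[Advisory]
) -> Iterator[tuple[str, str]]:
    """Yield (host, advisory_id) for every installed version inside an affected range."""
    for item in inventory:
        installed = parse_version(item.version)
        for adv in advisories:
            if adv.product != item.product:
                continue
            if parse_version(adv.introduced) <= installed < parse_version(adv.fixed):
                yield item.host, adv.advisory_id


if __name__ == "__main__":
    advisories = [Advisory("EXAMPLE-0001", "webserver", "2.4.0", "2.4.51")]
    inventory = [
        InstalledSoftware("app01", "webserver", "2.4.49"),
        InstalledSoftware("app02", "webserver", "2.4.58"),
    ]
    print(list(match_findings(inventory, advisories)))
```

Only `app01` is reported, because 2.4.58 is already past the hypothetical fixed version.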

Authenticated vs. Unauthenticated Scanning

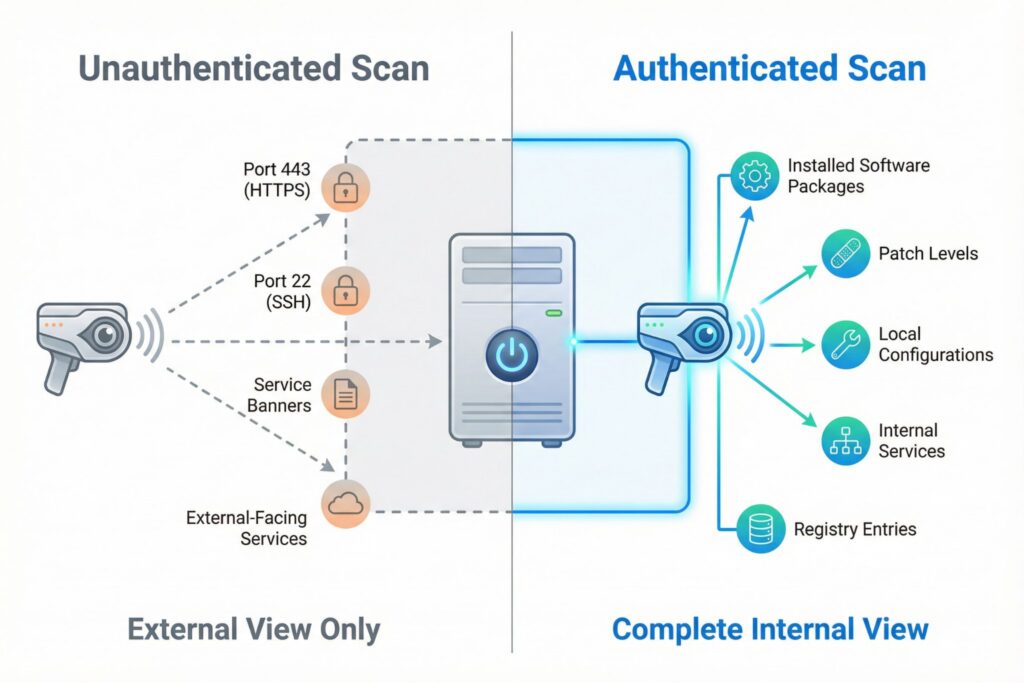

The distinction between these two scan types matters significantly. An unauthenticated scan shows what is visible from outside a system — exposed services and service banners that any network-connected host could observe. An authenticated scan logs into systems with valid credentials and inspects installed software, patch levels, and local configuration. Authenticated scans produce far more complete results, and in most internal assessments they are the right default.

For environments where authenticated scanning is not yet configured, unauthenticated results represent a minimum floor, not a complete picture. Missing patches on internal systems will not appear, and the actual risk posture is likely worse than the report suggests.
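The "minimum floor" point can be made measurable by comparing an unauthenticated and an authenticated scan of the same hosts. In this minimal sketch each finding is assumed to be a `(host, finding_id)` pair; the host and finding IDs are hypothetical labels, and `coverage_gap` is an illustrative name rather than a feature of any scanner.

```python
Finding = tuple[str, str]  # (host, finding_id)


def coverage_gap(unauthenticated: set[Finding], authenticated: set[Finding]) -> dict:
    """Compare an unauthenticated and an authenticated scan of the same hosts."""
    union = unauthenticated | authenticated
    return {
        # Findings that only the credentialed scan reported (e.g. missing local patches).
        "only_authenticated": sorted(authenticated - unauthenticated),
        # Findings that only the unauthenticated scan reported.
        "only_unauthenticated": sorted(unauthenticated - authenticated),
        # Share of all known findings the unauthenticated scan caught on its own.
        "unauthenticated_coverage": len(unauthenticated) / len(union) if union else 1.0,
    }


if __name__ == "__main__":
    unauth = {("srv1", "SMB-EXPOSED"), ("srv1", "WEAK-TLS")}
    auth = unauth | {("srv1", "MISSING-PATCH-A"), ("srv1", "MISSING-PATCH-B")}
    print(coverage_gap(unauth, auth))
```

In this example the unauthenticated scan sees half of the known findings, and both missing patches appear only in the credentialed results.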

Prioritizing Vulnerability Assessment Results

A 10,000-finding report without prioritization leads to triage paralysis — teams either freeze or spend cycles on low-value items while critical exposures age. Three frameworks do the practical work here.

| Framework | What It Measures | Best Used For |
|---|---|---|
| CVSS v4.0 | Severity of a vulnerability in isolation | Baseline classification, compliance reporting |
| EPSS | Probability of exploitation activity in the next 30 days | Prioritization, remediation sequencing |
| CISA KEV | Confirmed active exploitation | Immediate-action triage |

CVSS v4.0, published by FIRST in November 2023, refines scoring granularity compared to prior versions (FIRST, CVSS v4.0 Specification, 2023). Many organizations still operate on v3.1, which remains valid but can score the same vulnerability differently. EPSS, also maintained by FIRST, assigns each CVE a score between 0 and 1 that estimates the probability of exploitation activity being observed in the next 30 days (FIRST, EPSS Model, 2023). In practice, many teams treat findings scoring above 0.1 as elevated exploitation risk that warrants accelerated remediation.

CISA’s Known Exploited Vulnerabilities catalog lists vulnerabilities with confirmed active exploitation (CISA). A finding in that catalog should compress remediation timelines regardless of its CVSS score.
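The three signals can be combined into a simple triage rule: KEV membership first, EPSS second, CVSS as the fallback. The sketch below is one hypothetical way to encode that ordering. The tier names, the 9.0 CVSS cut-off, and the placeholder CVE IDs are assumptions; the 0.1 EPSS threshold is the rule of thumb from the text. In a real pipeline the KEV set would be loaded from CISA's published catalog (offered as CSV and JSON) and EPSS scores from FIRST's data; both steps are omitted here.

```python
from dataclasses import dataclass

EPSS_THRESHOLD = 0.1  # rule of thumb from the text; tune to local risk appetite
CVSS_HIGH = 9.0       # hypothetical cut-off for the CVSS-only tier


@dataclass
class Finding:
    cve_id: str
    cvss_base: float  # CVSS base score, 0.0 to 10.0
    epss: float       # EPSS probability, 0.0 to 1.0


def triage_tier(finding: Finding, kev_ids: set[str]) -> str:
    """KEV membership overrides everything, then EPSS, then CVSS severity."""
    if finding.cve_id in kev_ids:
        return "immediate"
    if finding.epss > EPSS_THRESHOLD:
        return "accelerated"
    if finding.cvss_base >= CVSS_HIGH:
        return "high"
    return "standard"


TIER_ORDER = {"immediate": 0, "accelerated": 1, "high": 2, "standard": 3}


def prioritize(findings: list[Finding], kev_ids: set[str]) -> list[Finding]:
    """Sort by tier, then by EPSS and CVSS (highest first) within a tier."""
    return sorted(
        findings,
        key=lambda f: (TIER_ORDER[triage_tier(f, kev_ids)], -f.epss, -f.cvss_base),
    )


if __name__ == "__main__":
    kev = {"CVE-EXAMPLE-3"}  # placeholder for IDs loaded from the KEV catalog
    findings = [
        Finding("CVE-EXAMPLE-1", cvss_base=9.8, epss=0.02),
        Finding("CVE-EXAMPLE-2", cvss_base=6.5, epss=0.35),
        Finding("CVE-EXAMPLE-3", cvss_base=7.5, epss=0.01),
        Finding("CVE-EXAMPLE-4", cvss_base=5.0, epss=0.001),
    ]
    for f in prioritize(findings, kev):
        print(f.cve_id, triage_tier(f, kev))
```

The KEV entry jumps to the top despite a moderate CVSS score, and the 9.8 CVSS finding falls behind the one with a high EPSS score, which is the behavior the text argues for.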

Where Vulnerability Assessments Fit in Security

One vendor’s assessment is another’s scan — a gap that creates real confusion when scoping an engagement or comparing programs across teams. These distinctions are worth keeping precise.

A vulnerability scan is an automated tool run. An assessment includes human analysis, prioritization, and a risk-contextualized report. A penetration test attempts to chain vulnerabilities into actual exploitation paths and requires authorized testers with defined rules of engagement. A risk assessment is broader still, addressing threats, likelihood, and business impact across people, process, and technology.

An assessment is also a point-in-time activity. A vulnerability management program runs continuously, with recurring scans, defined SLAs for remediation by severity, exception processes, and metrics tracked over time. Control 7 of CIS Controls v8 recommends continuous vulnerability management with regular assessment cycles as a baseline. Organizations with rapid deployment cycles may need tighter windows or integrated scanning in CI/CD pipelines.
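Severity-based SLAs reduce to a due-date calculation that a program can check on every cycle. This is a minimal sketch; the SLA values in `SLA_DAYS` and the finding fields (`id`, `severity`, `discovered`, `status`) are hypothetical placeholders for whatever the program's policy and tracking system define.

```python
from datetime import date, timedelta

# Hypothetical remediation SLAs in days; real values come from the program's policy.
SLA_DAYS = {"critical": 15, "high": 30, "medium": 90, "low": 180}


def due_date(severity: str, discovered: date) -> date:
    return discovered + timedelta(days=SLA_DAYS[severity])


def overdue(findings: list[dict], today: date) -> list[str]:
    """IDs of open findings whose SLA due date has passed."""
    return [
        f["id"]
        for f in findings
        if f["status"] == "open" and today > due_date(f["severity"], f["discovered"])
    ]


if __name__ == "__main__":
    findings = [
        {"id": "F-1", "severity": "critical", "discovered": date(2024, 1, 1), "status": "open"},
        {"id": "F-2", "severity": "medium", "discovered": date(2024, 1, 1), "status": "open"},
        {"id": "F-3", "severity": "critical", "discovered": date(2024, 1, 1), "status": "closed"},
    ]
    print(overdue(findings, today=date(2024, 2, 1)))  # F-1 was due 2024-01-16
```

Closed findings are ignored, so the overdue list doubles as the escalation queue for the exception process.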

From Report to Remediation

Without assigned owners and deadlines, a report ages on a shared drive until the next audit asks why nothing moved. The most common reason findings stall is not technical — it is unclear ownership. Security flags the issue; nobody knows who fixes it.

Assigning Ownership

Each finding needs a responsible team — usually IT operations or a system owner — and a realistic deadline tied to severity. Critical findings in internet-facing systems warrant different urgency than medium findings on isolated internal hosts. Documenting ownership boundaries in a RACI or equivalent prevents findings from stalling between teams. Security should verify remediation through rescanning rather than accepting written confirmation alone.
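Ownership routing and rescan-based verification can both be expressed as small, auditable functions. The sketch assumes a hypothetical `OWNERS` map standing in for the "Responsible" column of a RACI, and finding IDs that stay stable across scans (here `host:vulnerability`). Unmapped assets go to an explicit `unassigned` queue instead of silently disappearing.

```python
# Hypothetical ownership map: the "Responsible" team per asset group, as a RACI might record it.
OWNERS = {"app-servers": "application-team", "db-servers": "dba-team"}


def route(findings: list[dict]) -> dict[str, list[str]]:
    """Group finding IDs by owning team; unmapped asset groups land in 'unassigned'."""
    queues: dict[str, list[str]] = {}
    for f in findings:
        team = OWNERS.get(f["asset_group"], "unassigned")
        queues.setdefault(team, []).append(f["id"])
    return queues


def verify_closure(claimed_fixed: set[str], rescan_ids: set[str]) -> tuple[list[str], list[str]]:
    """Accept a fix only if the finding is absent from the rescan.

    Finding IDs are assumed stable across scans, e.g. 'host:vulnerability'.
    """
    verified = sorted(claimed_fixed - rescan_ids)
    still_present = sorted(claimed_fixed & rescan_ids)
    return verified, still_present


if __name__ == "__main__":
    findings = [
        {"id": "app01:EXAMPLE-1", "asset_group": "app-servers"},
        {"id": "db01:EXAMPLE-2", "asset_group": "db-servers"},
        {"id": "kiosk07:EXAMPLE-3", "asset_group": "kiosks"},
    ]
    print(route(findings))
    print(verify_closure({"app01:EXAMPLE-1", "db01:EXAMPLE-2"}, rescan_ids={"db01:EXAMPLE-2"}))
```

One caveat on the design: a finding that vanishes because the host was unreachable during the rescan is not a fix, so a real workflow would also confirm that the host was actually scanned before closing anything.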

A practical example: a mid-sized financial services firm runs an authenticated internal scan and discovers that three application servers are running an outdated version of a widely used component with a high-severity CVE. The component is not internet-facing, but it sits on the same network segment as customer data systems. The security team flags it as high priority based on lateral movement risk rather than CVSS score alone, assigns remediation to the application team with a 15-day deadline, and verifies the patch in the next scan cycle. The finding closes with evidence logged for audit purposes.

Metrics That Keep the Process Honest

Three numbers are worth tracking consistently.

• Mean time to remediate (MTTR) by severity tier

• Percentage of critical and high findings closed within defined SLA

• Repeat findings rate across consecutive scan cycles

Repeat findings — the same vulnerability appearing in successive assessments — are among the most useful signals. They indicate either broken remediation workflows or unmanaged asset sprawl.
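All three metrics reduce to simple arithmetic over finding records. The sketch assumes each closed finding carries hypothetical `severity`, `discovered`, and `fixed` fields and that finding IDs are stable between scan cycles; the SLA values are placeholders. The repeat findings rate is defined here as the share of the current cycle's findings already seen in the previous cycle, which is one reasonable definition among several.

```python
from collections import defaultdict
from datetime import date
from statistics import mean


def days_open(f: dict) -> int:
    return (f["fixed"] - f["discovered"]).days


def mttr_by_severity(closed: list[dict]) -> dict[str, float]:
    """Mean days from discovery to fix, per severity tier."""
    buckets: dict[str, list[int]] = defaultdict(list)
    for f in closed:
        buckets[f["severity"]].append(days_open(f))
    return {sev: mean(values) for sev, values in buckets.items()}


def sla_compliance(closed: list[dict], sla_days: dict[str, int],
                   tiers: tuple[str, ...] = ("critical", "high")) -> float:
    """Share of closed critical/high findings that were fixed within SLA."""
    relevant = [f for f in closed if f["severity"] in tiers]
    if not relevant:
        return 1.0
    on_time = sum(days_open(f) <= sla_days[f["severity"]] for f in relevant)
    return on_time / len(relevant)


def repeat_rate(previous_ids: set[str], current_ids: set[str]) -> float:
    """Fraction of this cycle's findings that were also present last cycle."""
    if not current_ids:
        return 0.0
    return len(current_ids & previous_ids) / len(current_ids)


if __name__ == "__main__":
    closed = [
        {"severity": "critical", "discovered": date(2024, 1, 1), "fixed": date(2024, 1, 11)},
        {"severity": "critical", "discovered": date(2024, 1, 1), "fixed": date(2024, 1, 21)},
        {"severity": "high", "discovered": date(2024, 1, 1), "fixed": date(2024, 2, 10)},
    ]
    print(mttr_by_severity(closed))
    print(sla_compliance(closed, {"critical": 15, "high": 30}))
    print(repeat_rate({"a", "b", "c"}, {"b", "c", "d", "e"}))
```

This compliance figure counts only closed findings. A stricter variant would also count open findings already past their due date, which otherwise flatter the number.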

Where Assessments Fall Short

For small teams without dedicated security staff, fully manual triage is rarely feasible. Prioritizing CISA KEV entries and findings with EPSS scores above 0.1 covers the most dangerous ground without requiring deep analyst capacity.

Environment-Specific Limitations

Different infrastructure types introduce gaps that standard scanners do not cover on their own.

• Cloud environments require configuration-specific assessment tools alongside traditional scanners. Infrastructure-as-code and container workloads introduce vulnerabilities that agent-based or network scanning misses. Software Bill of Materials (SBOM) analysis is an increasingly practical complement to scanner output.

• OT and ICS environments need careful scoping — aggressive scanning against programmable logic controllers or legacy SCADA systems can cause operational disruption. Work should follow NIST SP 800-82 Rev. 3 (NIST) alongside vendor-specific guidance.

• Multi-tenant SaaS platforms present their own constraints. Organizations often cannot run scans against shared infrastructure they do not own, so assessments focus on cloud account configuration, API security, and the organization’s own workloads.
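SBOM analysis can feed the same kind of matching a scanner performs, without touching the running workload. A minimal sketch, assuming a CycloneDX-style JSON document with a top-level `components` array whose entries have `name` and `version` fields. The component names and the `KNOWN_VULNERABLE` set are hypothetical, exact-version matching stands in for proper range matching, and nested components and package URLs (`purl`) are ignored for brevity.

```python
import json

# A trimmed, hypothetical CycloneDX-style SBOM; real documents carry many more fields.
SBOM_JSON = """
{
  "bomFormat": "CycloneDX",
  "specVersion": "1.5",
  "components": [
    {"type": "library", "name": "examplelib", "version": "1.2.3"},
    {"type": "library", "name": "otherlib", "version": "4.0.0"}
  ]
}
"""

# Hypothetical known-vulnerable (name, version) pairs, e.g. derived from advisories.
KNOWN_VULNERABLE = {("examplelib", "1.2.3")}


def sbom_components(raw: str) -> list[tuple[str, str]]:
    """Extract (name, version) pairs from the top-level components list."""
    doc = json.loads(raw)
    return [(c["name"], c.get("version", "")) for c in doc.get("components", [])]


def flag_components(raw: str) -> list[tuple[str, str]]:
    """Return components whose exact (name, version) appears in the vulnerable set."""
    return [comp for comp in sbom_components(raw) if comp in KNOWN_VULNERABLE]


if __name__ == "__main__":
    print(flag_components(SBOM_JSON))
```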

Frequently Asked Questions (FAQ)

How often should vulnerability assessments be run?

The right cadence depends on how frequently the environment changes. For most organizations, quarterly assessments combined with continuous scanning on critical assets is a defensible baseline. Environments with rapid deployment cycles may need more frequent assessment windows or integrated scanning in CI/CD pipelines.

How does a vulnerability assessment differ from a security audit?

A security audit evaluates compliance with defined policies, standards, or regulatory requirements. A vulnerability assessment focuses specifically on technical weaknesses in systems and software. They can overlap, but audits are typically process- and control-oriented, while assessments are technically focused.

Can vulnerability scanning disrupt systems?

Aggressive or misconfigured scans can cause instability on fragile systems, particularly legacy hardware or OT devices. Running scans during maintenance windows, using credentialed scanning rather than brute-force probing, and following vendor scanning guidelines significantly reduces this risk.
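Maintenance-window rules are easiest to honor when the tooling that launches scans enforces them. The sketch below is a hypothetical gate, assuming hosts carry tags such as `ot` or `legacy` and that timestamps are naive local times; the window, days, and tag names are placeholders. It only controls when fragile hosts are scanned, not how gently, so scan intensity settings still need to follow vendor guidance.

```python
from datetime import datetime, time

# Hypothetical policy: fragile hosts are scanned only 01:00-05:00 local time on weekends.
WINDOW_START = time(1, 0)
WINDOW_END = time(5, 0)
WINDOW_DAYS = {5, 6}  # datetime.weekday(): Monday is 0, Sunday is 6
FRAGILE_TAGS = {"ot", "legacy"}


def in_maintenance_window(now: datetime) -> bool:
    return now.weekday() in WINDOW_DAYS and WINDOW_START <= now.time() < WINDOW_END


def may_scan(host_tags: set[str], now: datetime) -> bool:
    """Non-fragile hosts may be scanned any time; fragile ones only inside the window."""
    if host_tags & FRAGILE_TAGS:
        return in_maintenance_window(now)
    return True


if __name__ == "__main__":
    saturday_night = datetime(2024, 6, 8, 2, 30)  # a Saturday
    monday_night = datetime(2024, 6, 10, 2, 30)   # a Monday
    print(may_scan({"ot"}, saturday_night), may_scan({"ot"}, monday_night))
```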

Who is responsible for fixing findings?

Security teams typically own identification and prioritization. IT operations or system owners own remediation. Clear ownership boundaries, documented in a RACI or equivalent, prevent findings from stalling between teams. Security should verify remediation through rescanning rather than accepting written confirmation alone.

Are free or open-source scanners good enough?

Free and open-source tools such as OpenVAS can produce useful results for organizations with limited budgets. The limitation is not usually scanning capability but analyst capacity: interpreting results, eliminating false positives, and managing remediation workflows require time and skill regardless of the tool used.
