Author: Sean Whitmore, GWEB, CSSLP — Application Security Engineer. 11 years of experience in secure software development lifecycle implementation, code review, and vulnerability management for web and mobile applications. Works with development teams to integrate security practices from design through deployment.

Consultant: James Harker, CISSP, vulnerability management specialist with twelve years of experience in enterprise security programs, risk-based remediation strategy, and security operations center advisory work.

Organizations handling sensitive data or critical infrastructure should consult a certified cybersecurity professional before implementing or modifying a security program.

Modern organizations face a volume of software vulnerabilities that no security team, regardless of size, can fully address. The National Vulnerability Database published over 28,900 new Common Vulnerabilities and Exposures (CVEs) in 2023 alone — a record at the time (NIST 2023). Vulnerability prioritization is the structured process of deciding which security weaknesses to fix first, based on the actual risk they pose to a specific environment rather than a severity score on paper.

That distinction matters more than it sounds. Without a clear prioritization process, teams either freeze under the volume or default to fixing whatever the scanner flagged as alarming. Neither approach improves security in any meaningful way.

Why Patch Order Changes Everything

A high severity rating on a vulnerability does not tell you how dangerous that flaw is in your environment. Consider a critical-rated weakness in an internal system with no network access and no sensitive data sitting beside a medium-rated SQL injection flaw in a customer-facing checkout application. The checkout flaw is the urgent one. Raw scores cannot make that call.

This was exactly the failure pattern behind the 2021 Kaseya VSA ransomware attack. The vulnerability that the REvil ransomware group exploited — CVE-2021-30116 — had been reported to Kaseya by Dutch security researchers months before the attack. The organization was aware of it. But patch progress was slow, in part because the business impact of that specific flaw in that specific system was never fully translated into remediation urgency. On July 2, 2021, REvil used it to push ransomware to an estimated 1,500 downstream businesses through managed service providers running Kaseya’s software. The vulnerability was not a surprise. The prioritization failure was.

How CVSS and EPSS Work Together

Effective prioritization combines technical severity with business context. The Common Vulnerability Scoring System (CVSS) provides a standardized way to rate severity from 0 to 10 across dimensions like attack complexity, required access, and potential impact. It is useful as a common language — it gets teams aligned and integrates with most scanning platforms. But CVSS does not tell you whether anyone is actively using a flaw in real attacks right now.

The Exploit Prediction Scoring System (EPSS), developed by FIRST (Forum of Incident Response and Security Teams), adds that layer. EPSS assigns a probability estimate for exploitation within the next 30 days, which makes triage decisions far more defensible (FIRST 2023). A vulnerability with a CVSS score of 9.8 and an EPSS probability of 0.03% is a different problem from one scoring 7.2 with an EPSS probability of 60%.

| | CVSS | EPSS |
|---|---|---|
| What it measures | Technical severity in a worst-case scenario | Probability of exploitation in the next 30 days |
| Score range | 0–10 (higher = more severe) | 0–100% (higher = more likely to be exploited) |
| Updated | When a CVE is published or re-evaluated | Daily, based on current threat intelligence |
| Main limitation | Does not reflect real-world attacker activity | Does not capture business impact or asset value |
| Best used for | Establishing a severity baseline; vendor communication | Active triage; deciding what to patch this week |

Used together, they answer two different questions: how bad could this get, and how likely is it to happen soon. Neither is sufficient alone.
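
To make the comparison concrete, here is a minimal Python sketch that orders the two example vulnerabilities described earlier (CVSS 9.8 with EPSS 0.03%, and CVSS 7.2 with EPSS 60%). The `Finding` class, the `triage_order` function, and the placeholder identifiers are illustrative assumptions, and sorting on EPSS first is only one possible policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    cve_id: str   # placeholder identifier, not a real CVE
    cvss: float   # severity, 0.0 to 10.0
    epss: float   # exploitation probability for the next 30 days, 0.0 to 1.0


def triage_order(findings):
    """Sort by likelihood of exploitation first, then by severity."""
    return sorted(findings, key=lambda f: (f.epss, f.cvss), reverse=True)


findings = [
    Finding("CVE-EXAMPLE-A", cvss=9.8, epss=0.0003),
    Finding("CVE-EXAMPLE-B", cvss=7.2, epss=0.60),
]

ordered = triage_order(findings)
for f in ordered:
    print(f"{f.cve_id}: CVSS {f.cvss}, EPSS {f.epss:.2%}")
```

Under this ordering the 7.2 finding is handled before the 9.8 one, which is the point of pairing the two scores. A real program would still layer asset context on top.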

Security teams also assess whether a vulnerability has a known exploit circulating in the wild, whether threat actors are weaponizing it in campaigns targeting their industry, and whether the affected system is exposed to untrusted networks. CISA’s Known Exploited Vulnerabilities (KEV) catalog lists flaws with confirmed exploitation in the wild. U.S. federal civilian agencies are required to remediate its entries by set due dates, and it has become a practical reference for private-sector organizations worldwide (CISA 2024). For teams without a mature threat intelligence program, checking a finding against the KEV list is one of the fastest ways to identify what needs immediate attention.
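
A KEV check can be as simple as a set lookup. The sketch below uses a tiny inline stand-in for the catalog rather than a live download. The `vulnerabilities` list and `cveID` key reflect the published JSON feed as we understand it and should be verified against the current schema. The function names and the placeholder finding ID are hypothetical.

```python
import json

# Illustrative stand-in for the KEV JSON feed, not a copy of the catalog.
# Assumption: entries live under "vulnerabilities" and carry a "cveID" key.
SAMPLE_KEV = json.loads("""
{
  "vulnerabilities": [
    {"cveID": "CVE-2021-44228"},
    {"cveID": "CVE-2021-30116"}
  ]
}
""")


def kev_ids(catalog):
    """Collect every CVE identifier listed in the catalog."""
    return {entry["cveID"] for entry in catalog.get("vulnerabilities", [])}


def split_by_kev(finding_ids, catalog):
    """Separate scanner findings into KEV-listed and everything else."""
    known = kev_ids(catalog)
    urgent = [cve for cve in finding_ids if cve in known]
    rest = [cve for cve in finding_ids if cve not in known]
    return urgent, rest


# "CVE-0000-00001" is a made-up ID standing in for a non-KEV finding.
urgent, rest = split_by_kev(["CVE-0000-00001", "CVE-2021-44228"], SAMPLE_KEV)
print("Patch first:", urgent)
print("Triage normally:", rest)
```

In practice the catalog would be refreshed on a schedule and the lookup run whenever new scan results arrive.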

Business impact belongs in the equation too. An e-commerce company will treat a SQL injection vulnerability in its payment flow differently than a software vendor running an internal wiki. The asset’s role in revenue, data sensitivity, and regulatory exposure all shape how urgently a flaw needs to be addressed.
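
One rough way to express business impact is to scale the severity score by what the asset does. The sketch below is an assumption-heavy illustration: the `Asset` fields, the point values in `WEIGHTS`, and the `contextual_score` formula are invented for this example and would need tuning for any real environment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    name: str
    internet_facing: bool
    handles_sensitive_data: bool
    regulated: bool
    revenue_critical: bool


# Hypothetical point values; baseline plus all four factors sums to 100.
BASELINE = 20
WEIGHTS = {
    "internet_facing": 30,
    "handles_sensitive_data": 20,
    "regulated": 15,
    "revenue_critical": 15,
}


def context_weight(asset):
    """Return a 20-100 weight reflecting how much the asset matters."""
    return BASELINE + sum(
        points for attr, points in WEIGHTS.items() if getattr(asset, attr)
    )


def contextual_score(cvss, asset):
    """Scale a CVSS score by the asset's context weight."""
    return round(cvss * context_weight(asset) / 100, 2)


wiki = Asset("internal-wiki", False, False, False, False)
checkout = Asset("checkout", True, True, True, True)

print(contextual_score(9.8, wiki))      # critical flaw, low-value asset
print(contextual_score(6.5, checkout))  # medium flaw, high-value asset
```

With these illustrative weights, the medium-rated flaw in the checkout application outranks the critical-rated flaw on the isolated wiki.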

The Limits of Scoring Frameworks

CVSS Version 4.0, released in late 2023, introduced four metric groups — Base, Threat, Environmental, and Supplemental — expanding the framework’s ability to capture real-world exploitability (FIRST 2023). The Supplemental group provides contextual metadata but does not affect the final score, a nuance practitioners sometimes miss when interpreting vendor reports.

Despite those improvements, CVSS remains a severity rating, not a risk rating. Using it as the sole prioritization input often results in teams working through a long list of high-severity findings, many of which pose little practical danger in their actual environment.

A Practical Prioritization Workflow

Understanding the principles is one thing. Knowing what to actually do each week is another. James Harker, CISSP, who has spent twelve years advising enterprise security operations teams on risk-based remediation strategy, notes that in most engagements, fewer than 20% of critical-rated findings require immediate action once asset context and active exploit data are factored in. The score tells you what looks alarming. The context tells you what is actually dangerous.

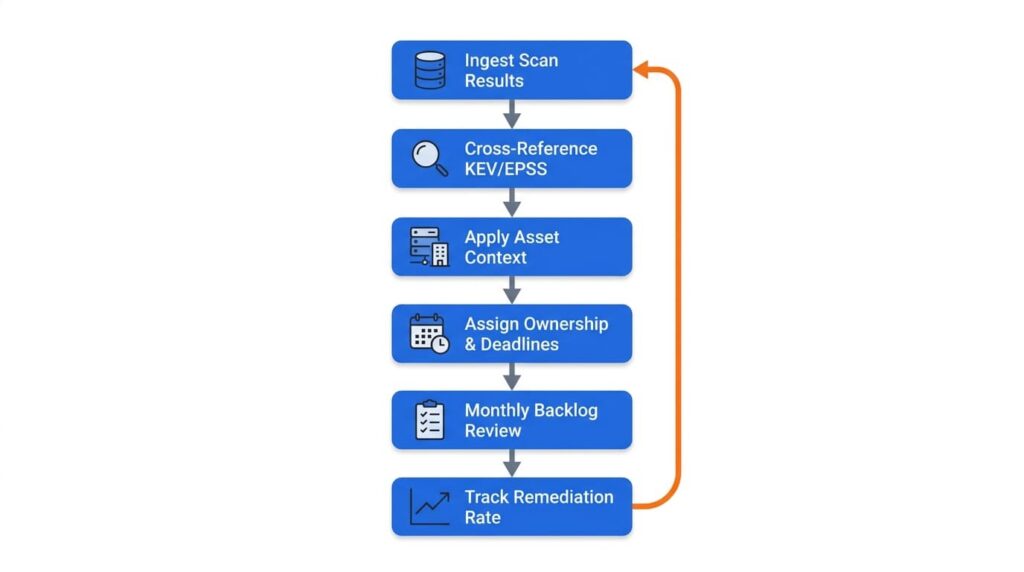

A repeatable prioritization cycle typically looks like this:

- Run or ingest scan results and normalize findings into a single tracking system — not a spreadsheet shared over email, but a tool that timestamps every finding and records its status.

- Cross-reference against CISA KEV and EPSS data the same day new results arrive. Anything appearing on the KEV list or carrying a high EPSS probability moves to the top of the queue regardless of CVSS score.

- Apply asset context — identify whether the affected system is internet-facing, handles sensitive data, or sits inside a regulated environment. A critical-rated flaw on an isolated development server is not the same problem as the same flaw on a production database.

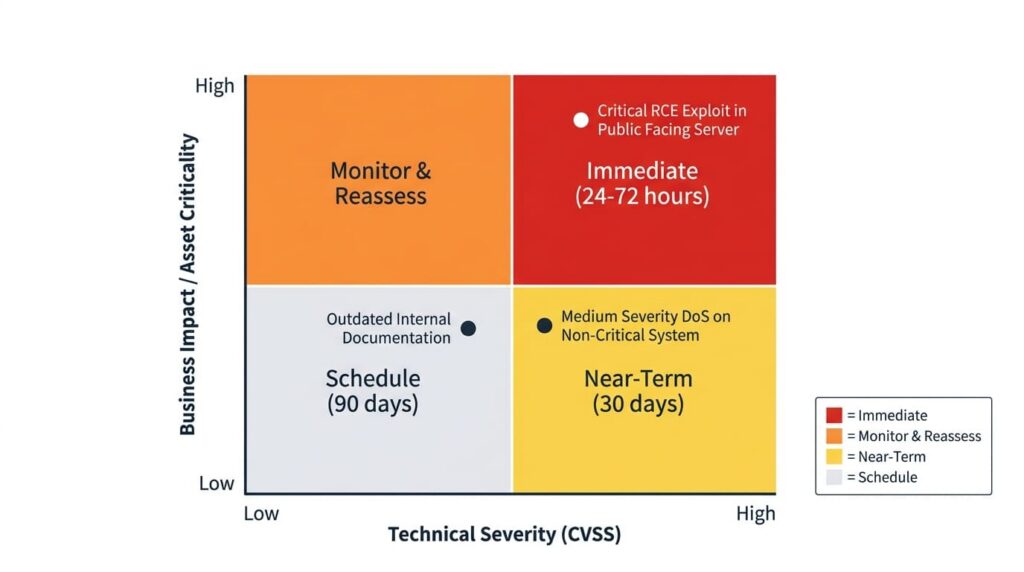

- Assign ownership and a remediation deadline by severity tier. Most teams use three tiers: immediate (24–72 hours for actively exploited flaws), near-term (within 30 days for high-severity findings without active exploitation), and scheduled (within 90 days for medium and lower findings with no known exploits).

- Review the backlog monthly and re-evaluate items that were deprioritized. The threat landscape shifts — a flaw that was low-risk in January may have a public proof-of-concept by March. Static prioritization decisions go stale.

- Track remediation rate, not just findings count. The metric that matters is mean time to remediate by severity tier. Teams that track only the number of open vulnerabilities often miss that their critical backlog is growing even as they close low-severity items.
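
The tiering step in the cycle above can be sketched in a few lines of Python. The three tiers and their windows come from the list. The EPSS cutoff of 0.5, the use of CVSS 7.0 as the high-severity boundary, and the function names are assumptions made for illustration. Asset context is left out to keep the example short.

```python
from datetime import date, timedelta
from enum import Enum


class Tier(Enum):
    # Values are remediation windows in days.
    IMMEDIATE = 3    # upper bound of the 24-72 hour window
    NEAR_TERM = 30
    SCHEDULED = 90


def assign_tier(cvss, epss, in_kev, epss_threshold=0.5):
    """Pick a tier; KEV listing or high EPSS overrides the CVSS score."""
    if in_kev or epss >= epss_threshold:
        return Tier.IMMEDIATE
    if cvss >= 7.0:
        return Tier.NEAR_TERM
    return Tier.SCHEDULED


def deadline(found_on, tier):
    """Remediation due date for a finding recorded on found_on."""
    return found_on + timedelta(days=tier.value)


tier = assign_tier(cvss=7.2, epss=0.60, in_kev=False)
print(tier.name, deadline(date(2024, 1, 1), tier))
```

Because the tier is recomputed from current inputs, re-running it during the monthly backlog review picks up findings whose EPSS or KEV status has changed.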

That last point is where many programs quietly fail. Closing easy findings generates activity without improving security posture.
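
Mean time to remediate by tier is straightforward to compute once every finding has an open and close date. The following is a minimal sketch: the record layout (tier, opened, closed) and the sample dates are invented for illustration, and a real tracking system would supply its own fields.

```python
from collections import Counter, defaultdict
from datetime import date
from statistics import mean


def mttr_by_tier(records):
    """Mean days from open to close per tier, over closed findings only."""
    durations = defaultdict(list)
    for tier, opened, closed in records:
        if closed is not None:
            durations[tier].append((closed - opened).days)
    return {tier: mean(days) for tier, days in durations.items()}


def open_by_tier(records):
    """Count findings that are still open in each tier."""
    return Counter(tier for tier, _, closed in records if closed is None)


# Hypothetical sample data.
records = [
    ("immediate", date(2024, 1, 1), date(2024, 1, 3)),
    ("immediate", date(2024, 1, 5), date(2024, 1, 9)),
    ("immediate", date(2024, 1, 10), None),
    ("scheduled", date(2024, 1, 1), date(2024, 2, 10)),
]

mttr = mttr_by_tier(records)
still_open = open_by_tier(records)
print(mttr)
print(dict(still_open))
```

Reporting the open count next to the mean matters, because a tier can show a healthy average while its unresolved backlog grows.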

Where Vulnerability Programs Break Down

One persistent problem is treating a patch backlog as a linear queue ordered by score. The operational reality is that some systems can be patched in minutes while others require vendor coordination, extended change-control approval, or months of regression testing. Remediation capacity matters as much as scoring, and prioritization has to account for both.
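
One way to account for both risk and capacity is to walk the queue in risk order and schedule only what fits the available hours, flagging the rest for a separate change window. The sketch below is a simple heuristic, not an optimal scheduler. The tuple layout, risk values, and effort estimates are hypothetical.

```python
def plan_week(items, capacity_hours):
    """Schedule findings in risk order within a fixed hour budget.

    items: list of (finding_id, risk, effort_hours) tuples.
    Returns (planned, deferred); deferred items did not fit this week.
    """
    planned, deferred = [], []
    remaining = capacity_hours
    for finding_id, risk, effort in sorted(
        items, key=lambda item: item[1], reverse=True
    ):
        if effort <= remaining:
            planned.append(finding_id)
            remaining -= effort
        else:
            deferred.append(finding_id)
    return planned, deferred


# Hypothetical backlog: F-1 needs vendor coordination and weeks of testing.
backlog = [
    ("F-1", 9.0, 40),
    ("F-2", 6.0, 2),
    ("F-3", 8.0, 8),
    ("F-4", 2.0, 1),
]

planned, deferred = plan_week(backlog, capacity_hours=12)
print("This week:", planned)
print("Needs a change window:", deferred)
```

A deferred high-risk item should not simply wait in line. It is a candidate for compensating controls and an explicitly planned remediation project.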

Asset inventory gaps create a separate category of failure. Shadow IT, meaning devices and applications running outside the IT team’s awareness, regularly turns up in scan results without an owner or business context, and whatever the scanner never reaches stays invisible to any prioritization model. Verizon’s 2024 Data Breach Investigations Report found that exploitation of vulnerabilities as an initial access method nearly tripled year over year, with unmanaged assets contributing to a meaningful share of successful intrusions (Verizon DBIR 2024).

The Log4Shell disclosure in December 2021 exposed how widespread inventory problems actually are. CVE-2021-44228, a critical remote code execution flaw in the Apache Log4j logging library, received a CVSS score of 10.0 and was actively exploited within hours of public disclosure. For most organizations, the harder problem was not recognizing the severity — that was obvious. It was figuring out where Log4j existed in their environment. Because the library was embedded inside countless other software dependencies, many teams spent weeks just identifying which of their systems were affected before patching could begin.
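
The embedded-dependency problem is easier to see as a graph search. The sketch below walks a toy dependency graph and reports every chain that leads to a target library. Apart from `log4j-core`, the package names are invented. A real inventory would come from a software bill of materials or a build tool's dependency report rather than a hand-written dictionary.

```python
def dependency_paths(graph, root, target):
    """Return every dependency chain from root to target (depth-first)."""
    paths = []

    def walk(node, trail):
        trail = trail + [node]
        if node == target:
            paths.append(trail)
            return
        for child in graph.get(node, []):
            if child not in trail:  # guard against circular dependencies
                walk(child, trail)

    walk(root, [])
    return paths


# Hypothetical application: log4j-core is three levels down.
graph = {
    "billing-service": ["web-framework", "report-lib"],
    "web-framework": ["logging-adapter"],
    "logging-adapter": ["log4j-core"],
    "report-lib": ["pdf-lib"],
}

paths = dependency_paths(graph, "billing-service", "log4j-core")
for chain in paths:
    print(" -> ".join(chain))
```

The application never declares the logging library directly, which is why a search limited to top-level dependencies would miss it.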

Over-reliance on automated scanning without analyst review compounds these inventory failures. Scanners produce false positives, miss context-specific risk factors, and cannot weigh the downstream consequences of a flaw the way an experienced analyst can.

The Case for Outside Expertise

Organizations without a dedicated security team — or those managing large, complex environments — often benefit from engaging a managed security service provider (MSSP) or a certified vulnerability management specialist. This is particularly true when regulatory frameworks such as PCI DSS (Payment Card Industry Data Security Standard) and HIPAA (Health Insurance Portability and Accountability Act) set specific patching windows for certain vulnerability classes. Missing those windows creates legal and financial exposure beyond the technical risk.

Penetration testing is a distinct discipline from vulnerability scanning. A licensed penetration tester validates which weaknesses can actually be exploited and demonstrates realistic attack paths through a controlled, authorized engagement — not just a list of findings. This requires explicit written authorization from the asset owner and must be performed only by qualified professionals in authorized environments. Attempting penetration testing without proper authorization, even on systems you believe you own, carries serious legal consequences.

For most organizations, a structured vulnerability management program — defined ownership, regular scanning, documented prioritization criteria, and tracked remediation — provides more practical protection than any single advanced platform. Prioritization is not a one-time task. Done consistently, it directly narrows the window between a vulnerability becoming known and an attacker being able to use it.

Frequently Asked Questions

What is the difference between vulnerability scanning and penetration testing?

Scanning is an automated process that identifies potential weaknesses across your systems and produces a list. Penetration testing goes further: a licensed tester actively attempts to exploit those weaknesses, chains them together, and demonstrates what a real attacker could achieve in practice. Scanning can run continuously and at scale; penetration testing is a point-in-time authorized engagement that requires documented permission from the asset owner before any work begins. The two are complementary: scanning tells you what may be exposed, testing tells you what can actually be broken.

Where should a team with limited resources start?

Start with asset inventory, because you cannot prioritize risk for systems you do not know exist. From there, CISA’s Known Exploited Vulnerabilities catalog gives you a free, frequently updated list of flaws being actively used in real attacks, which is a defensible starting point for any triage decision. For anything involving regulated data, such as payment card information or health records, bring in a certified specialist before making major remediation decisions.

What should you do when a vulnerability has no patch yet?

Apply compensating controls: restrict network access to the affected system, increase monitoring and logging around it, or disable the specific feature being targeted. Then document the interim status and keep the item on your tracking list until a fix is available.

Does prioritization work differently in cloud environments?

The principles are identical, but the operational challenge is inventory. Cloud environments spin resources up and down automatically, which means scan coverage must account for transient systems that may not appear in a weekly run. Shared responsibility models add another layer: cloud providers patch the underlying infrastructure, but application-layer vulnerabilities in code you deploy stay your responsibility. Without tooling that tracks ephemeral assets, even a well-run prioritization program will have blind spots.

Do compliance frameworks set patching deadlines?

PCI DSS sets a hard window: security patches for critical vulnerabilities must be installed within one month of the patch’s release. HIPAA does not prescribe specific timelines but requires reasonable and appropriate safeguards, which regulators increasingly read as timely remediation of known risks.
