Author: Sean Whitmore, GWEB, CSSLP — Application Security Engineer. 11 years of experience in secure software development lifecycle implementation, code review, and vulnerability management for web and mobile applications. Works with development teams to integrate security practices from design through deployment.

Consultant: David Hale, CISSP — application security engineer and supply chain security specialist with a background in CI/CD pipeline assessments, build integrity verification, and third-party risk management.

This article is intended for educational purposes and general security awareness. It does not constitute professional legal or compliance advice. If your organization has experienced a security incident or suspects its software supply chain has been compromised, consult a certified cybersecurity professional or incident response specialist before taking any remediation steps.

Software today is rarely built from scratch. A typical enterprise application pulls in hundreds of third-party libraries, open-source packages, build tools, and cloud services before it reaches a single user — and every one of those dependencies is a potential entry point. Not into your code directly, but into the trust relationships your systems already rely on.

A supply chain attack works by targeting something you already trust — and for most organizations, third-party software risk is where that exposure is largest and least formally managed. Rather than battering through your perimeter, an attacker compromises a library your developers use, a vendor who has persistent access to your environment, or a build pipeline with write permissions to production. The malicious code arrives through a channel your security tools have already decided is legitimate. That’s exactly what makes this category of attack so hard to catch.

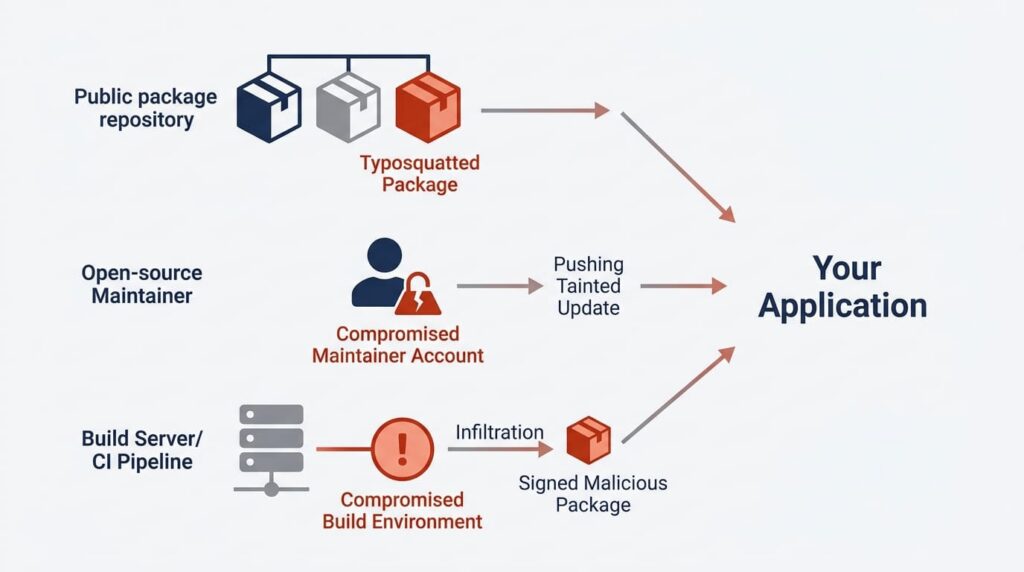

How Supply Chain Attacks Reach Your Systems

The attack surface is broader than most teams have formally mapped out. An attacker might publish a malicious package to a public repository under a name that closely resembles a legitimate one — typosquatting, it’s called. Or they might take over the account of a trusted open-source maintainer and push a backdoored update. Or find a way into a software vendor’s build infrastructure and inject code that ships to customers inside an official, digitally signed release.
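
As a rough illustration of how typosquatting can be screened for, the sketch below compares a requested package name against a list of names the team already depends on and flags near matches. The allowlist, the function name `find_lookalikes`, and the 0.8 similarity cutoff are assumptions made for this example, not part of any registry's tooling.

```python
import difflib

# Hypothetical allowlist of package names the team actually depends on.
KNOWN_PACKAGES = ["requests", "numpy", "pandas", "cryptography", "urllib3"]


def find_lookalikes(candidate: str, known: list[str], cutoff: float = 0.8) -> list[str]:
    """Return known package names that the candidate closely resembles.

    An exact match is not a lookalike, so it yields an empty list.
    """
    name = candidate.lower()
    if name in known:
        return []
    # get_close_matches ranks names by difflib's similarity ratio.
    return difflib.get_close_matches(name, known, n=3, cutoff=cutoff)


# A transposed-letter name is flagged as resembling a trusted package.
suspicious = find_lookalikes("reqeusts", KNOWN_PACKAGES)
```

A check like this is only a first filter: it catches small spelling variations but not a malicious package published under an entirely new name.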

That last scenario is particularly nasty to defend against. Code signing — cryptographically verifying that software came from a specific source — doesn’t protect against a compromised build environment. An attacker who controls the build can sign malicious code with a legitimate certificate. The signature checks out. The software does not.
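
The point can be shown with a toy model. In the sketch below, HMAC from the Python standard library stands in for a real asymmetric code-signing scheme, and `SIGNING_KEY`, `build`, `sign`, and `verify` are hypothetical names invented for the illustration. The signing step only sees the bytes the build produced, so a payload injected during the build is signed like anything else.

```python
import hashlib
import hmac

# Stand-in for the vendor's signing key. Real code signing uses asymmetric
# keys and certificates; HMAC keeps this sketch stdlib-only.
SIGNING_KEY = b"hypothetical-vendor-signing-key"


def sign(artifact: bytes) -> str:
    """Sign whatever bytes the build produced, with no view into how they were built."""
    return hmac.new(SIGNING_KEY, artifact, hashlib.sha256).hexdigest()


def verify(artifact: bytes, signature: str) -> bool:
    """Check that the artifact matches a signature issued by the key holder."""
    return hmac.compare_digest(sign(artifact), signature)


def build(source: bytes, injected: bytes = b"") -> bytes:
    """Toy build step; `injected` models code added by a compromised build host."""
    return source + injected


reviewed_source = b"print('hello')\n"
clean = build(reviewed_source)
tainted = build(reviewed_source, injected=b"# attacker payload\n")

tainted_signature = sign(tainted)

# The signature on the tainted build is valid because the legitimate key signed it.
signature_ok = verify(tainted, tainted_signature)
# Yet the shipped artifact is not what the reviewed source alone would produce.
differs_from_clean = tainted != clean
```

Verification answers "did the key holder sign these bytes?" and nothing more, which is why a valid signature says little about the integrity of the build that produced them.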

The attack surface extends further into continuous integration and continuous delivery (CI/CD) pipelines, which often hold broad access to source code repositories, deployment credentials, and production systems. A compromise at that stage can affect every subsequent build — silently, without touching the source code developers actually review.

Three Incidents That Shifted Security Thinking

The SolarWinds compromise, disclosed in late 2020, is the most widely cited example in this category. Attackers inserted malicious code directly into the build process for SolarWinds’ Orion network monitoring platform. Tainted updates were distributed to roughly 18,000 organizations, including multiple U.S. federal agencies (CISA 2021). The malware stayed dormant for weeks before activating — by design — which made it essentially invisible to standard endpoint protection.

In 2024, a contributor to the open-source compression library XZ Utils introduced a backdoor after spending years building credibility inside the project. A Microsoft engineer named Andres Freund noticed unusual CPU behavior during routine testing and traced it back to the compromised library before it reached stable release in major Linux distributions. Had it shipped at scale, it could have opened unauthorized remote access to an enormous number of systems. What makes this case stick in the memory is the attacker’s core technique: patience. Years of it.

A third incident that gets less airtime but illustrates a different attack vector entirely is the Polyfill.io compromise in 2024. The domain for a widely used JavaScript polyfill service was acquired by a new owner, who modified the served scripts to redirect users to malicious sites. Websites loading the script from the CDN were affected with no change to their own codebases. No developer account was breached. Control of one domain was enough.

Where Exposure Concentrates in Most Organizations

Open-source dependencies

The average enterprise application depends on hundreds of open-source packages, many maintained by a small number of unpaid volunteers with limited security resources. A significant proportion of widely used packages haven’t been meaningfully updated in years (OpenSSF 2023), leaving known vulnerabilities unpatched and publicly listed in the National Vulnerability Database. Software composition analysis (SCA) is designed to identify risk in the third-party components you pull in from outside. That’s different from static application security testing (SAST), which looks for flaws in your own code, and from dynamic application security testing (DAST), which probes a running application from the outside. Teams that run SAST and assume it covers their dependency exposure are mistaken.

Build and deployment infrastructure

CI/CD pipelines present a concentration risk that’s easy to miss in routine security reviews. In supply chain assessments, it’s not uncommon to find pipeline configurations where a single job holds unrestricted write access to production deployments — no approval gate, no audit logging. One compromised credential away from everything.
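
A review for this pattern can be sketched as a simple check over job settings. The `PipelineJob` fields below are hypothetical; real CI/CD systems expose permissions, approvals, and logging in their own formats, so the settings would first need to be extracted into a structure like this.

```python
from dataclasses import dataclass


@dataclass
class PipelineJob:
    # Hypothetical fields; real CI/CD systems expose these settings differently.
    name: str
    can_write_production: bool
    requires_approval: bool
    audit_logging: bool


def risky_jobs(jobs: list[PipelineJob]) -> list[tuple[str, list[str]]]:
    """Return production-writing jobs together with the safeguards they lack."""
    findings = []
    for job in jobs:
        if not job.can_write_production:
            continue  # jobs without production access are out of scope here
        missing = []
        if not job.requires_approval:
            missing.append("approval gate")
        if not job.audit_logging:
            missing.append("audit logging")
        if missing:
            findings.append((job.name, missing))
    return findings


jobs = [
    PipelineJob("unit-tests", False, False, True),
    PipelineJob("deploy-prod", True, False, False),
    PipelineJob("deploy-staged", True, True, True),
]
findings = risky_jobs(jobs)
```

Here only `deploy-prod` is reported, because it can write to production with neither safeguard in place.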

Vendor access as an attack vector

Many software providers maintain persistent access to customer environments for support, updates, or telemetry. Third-party and partner involvement has remained a consistent finding in annual breach research (Verizon DBIR 2024). If those vendor accounts are compromised, attackers move directly into customer networks without touching the perimeter at all. Before granting any vendor persistent access, there are specific questions worth pressing: What credentials are used, and how are they rotated? Is access scoped to the minimum required, or does the vendor account have broader permissions than the stated use case actually needs? Is there an audit log of every action taken under those credentials? Will the vendor share results from their most recent third-party security assessment? Most reputable vendors can answer those questions without hesitation. Those who can’t are a risk worth documenting on their own.
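
The questions above can also be tracked as a recurring check once a vendor has access. The sketch below is one possible shape for that record; the `VendorAccess` fields, the scope names, and the 90-day rotation threshold are all assumptions chosen for illustration, not a standard.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class VendorAccess:
    # Hypothetical record of one vendor's standing access.
    vendor: str
    granted_scopes: set[str]
    required_scopes: set[str]
    last_rotated: date
    audit_logged: bool


def review(access: VendorAccess, today: date, max_age_days: int = 90) -> list[str]:
    """List the ways a vendor's access falls short of the questions in the text."""
    issues = []
    excess = access.granted_scopes - access.required_scopes
    if excess:
        issues.append("excess scopes: " + ", ".join(sorted(excess)))
    if today - access.last_rotated > timedelta(days=max_age_days):
        issues.append("credential rotation overdue")
    if not access.audit_logged:
        issues.append("no audit log")
    return issues


access = VendorAccess(
    vendor="ExampleVendor",
    granted_scopes={"read:logs", "write:config"},
    required_scopes={"read:logs"},
    last_rotated=date(2024, 1, 1),
    audit_logged=False,
)
issues = review(access, today=date(2024, 6, 1))
```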

Controls That Reduce Your Supply Chain Exposure

SCA tools such as Grype examine codebases and container images for components with known vulnerabilities, cross-referencing them against sources such as the National Vulnerability Database (NIST SP 800-161 Rev. 1, 2022). Syft, often paired with Grype, generates the component inventory that gets scanned. Running these scans inside a CI/CD pipeline means a vulnerable component gets flagged before it reaches production rather than after an incident has already happened.
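
Conceptually, the matching step looks something like the sketch below. It is not how any particular scanner is implemented: the advisory identifiers, package names, and the plain dotted-version parser are placeholders, and real tools handle far messier version schemes and data feeds.

```python
from typing import NamedTuple


class Advisory(NamedTuple):
    advisory_id: str  # placeholder identifier, not a real CVE
    package: str
    fixed_in: str     # first version that contains the fix


def parse_version(text: str) -> tuple[int, ...]:
    """Parse a plain dotted version such as '2.4.1' (no pre-release tags)."""
    return tuple(int(part) for part in text.split("."))


def scan(components: dict[str, str], advisories: list[Advisory]) -> list[str]:
    """List advisory ids whose package is present at a version below the fix."""
    hits = []
    for adv in advisories:
        installed = components.get(adv.package)
        if installed is not None and parse_version(installed) < parse_version(adv.fixed_in):
            hits.append(adv.advisory_id)
    return hits


# Hypothetical inventory and advisory data.
components = {"examplelib": "1.4.2", "otherlib": "3.0.0"}
advisories = [
    Advisory("EXAMPLE-0001", "examplelib", "1.5.0"),
    Advisory("EXAMPLE-0002", "otherlib", "2.9.1"),
]
findings = scan(components, advisories)
# A CI job would typically fail the build when findings is non-empty.
```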

Maintaining a software bill of materials (SBOM), a structured inventory of every component in an application including version numbers, is increasingly required in regulated sectors. Under procurement guidance that followed the 2021 executive order on cybersecurity, U.S. federal agencies can require SBOMs from their software vendors (NIST 2022). The EU’s Cyber Resilience Act, which entered into force in late 2024, extends similar requirements to commercial software sold in European markets, with compliance obligations phasing in through 2027 (European Commission, Cyber Resilience Act, 2024).
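
To make "structured inventory" concrete, here is a minimal sketch that records Python packages in a JSON document. The field names are loosely modeled on the CycloneDX JSON format, but the output is not validated against that schema, and a production SBOM would normally come from a dedicated generator rather than a script like this.

```python
import json
from importlib import metadata


def build_sbom(components: list[tuple[str, str]]) -> dict:
    """Build a minimal inventory from (name, version) pairs, sorted by name."""
    return {
        "bomFormat": "CycloneDX",
        "specVersion": "1.5",
        "version": 1,
        "components": [
            {"type": "library", "name": name, "version": version}
            for name, version in sorted(components)
        ],
    }


def installed_components() -> list[tuple[str, str]]:
    """Collect (name, version) for distributions in the current Python environment."""
    found = []
    for dist in metadata.distributions():
        name = dist.metadata.get("Name")
        if name:  # skip distributions with broken metadata
            found.append((name, dist.version))
    return found


# Hypothetical component list; installed_components() would supply real data.
sbom = build_sbom([("otherlib", "3.0.0"), ("examplelib", "1.4.2")])
sbom_json = json.dumps(sbom, indent=2)
```

Passing `installed_components()` to `build_sbom` instead of the fixed list would inventory whatever environment the script runs in.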

Dependency pinning — locking specific package versions rather than resolving them dynamically at build time — reduces the risk of a substituted or tampered component slipping into a build. Tools like Renovate automate the review and update of pinned versions so teams don’t drift behind on security patches. But there’s a real trade-off: pinning reduces substitution risk while creating maintenance overhead. Teams that don’t actively review pinned versions can end up locked to versions with known vulnerabilities, which is its own problem.
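
A simple way to enforce pinning is to reject any requirement that does not lock an exact version. The sketch below assumes a pip-style requirements file and a deliberately simplified pattern; the package versions shown are example values only, and the check ignores edge cases such as wildcard versions and URL requirements.

```python
import re

# Matches "name==version", with optional extras such as "name[extra]==version".
PINNED = re.compile(r"^[A-Za-z0-9._-]+(\[[^\]]+\])?==[^\s;]+")


def unpinned_requirements(text: str) -> list[str]:
    """Return requirement lines that do not lock an exact version."""
    loose = []
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()   # drop comments and whitespace
        if not line or line.startswith("-"):  # skip blanks and pip options
            continue
        if not PINNED.match(line):
            loose.append(line)
    return loose


requirements = """\
requests==2.31.0
urllib3>=1.26      # floating range
cryptography
numpy==1.26.4
"""
loose = unpinned_requirements(requirements)
```

In this example `urllib3>=1.26` and `cryptography` are reported, since both would be resolved to whatever version is current at build time.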

The table below summarizes what each control protects against and where it runs out of road:

| Control | What it protects against | Where it stops |
|---|---|---|
| SCA scanning | Known vulnerabilities in dependencies | Does not detect novel or custom backdoors |
| SBOM | Inventory visibility, procurement audit | Does not prevent compromise; only records what is present |
| Dependency pinning | Package substitution during build | Requires active maintenance; stale pins accumulate risk |
| Code signing | Source verification for distributed software | Does not protect against a compromised build environment |
| Vendor access controls | Pivot through a trusted third party | Only effective if access scope and logging are enforced consistently |

No single control is enough on its own. The controls above represent software supply chain security best practices as they stand today — and their value is cumulative: an attacker who can bypass one rarely finds the next one equally easy.

Beyond What Your Team Can Assess Alone

Assessing a CI/CD pipeline for supply chain risk doesn’t fit neatly into general security administration. It requires familiarity with specific build toolchains, code signing infrastructure, and third-party access patterns that most in-house teams haven’t formally reviewed. Threat modeling a build pipeline — mapping every stage where untrusted input could influence a production artifact — is a distinct discipline from general vulnerability management or endpoint protection work.

A certified application security engineer can assess your pipeline and vendor integrations before an attacker does. For organizations handling sensitive data or operating in regulated industries, a managed security service provider with demonstrated experience in supply chain assessments is often the most practical path forward.

If an active compromise is suspected — unusual behavior following a signed update, unexpected outbound connections from a recently deployed application — isolate affected systems and contact an incident response team before attempting any remediation. Some supply chain implants are specifically designed to persist through standard removal procedures, and actions taken without guidance can make containment harder, not easier. If your organization operates in a regulated sector, review your breach notification obligations before taking any further steps.

Frequently Asked Questions

How is a supply chain attack different from a conventional cyberattack?

A conventional attack targets your systems directly, through phishing, brute force, or a known vulnerability in your infrastructure. A supply chain attack compromises something you already trust, so malicious code arrives through a legitimate channel. Standard defenses are not designed to scrutinize software that arrives through normal update mechanisms.

Does code signing protect against supply chain attacks?

No. Code signing verifies that software came from a specific source, not that the source itself was uncompromised, which is exactly what the SolarWinds incident demonstrated.

Can smaller organizations realistically defend against supply chain attacks?

Yes, with a proportionate approach. Keeping dependencies updated, enabling two-factor authentication on developer accounts and code repositories, and running a free SCA scan on each build covers the realistic threat surface for most non-targeted organizations. Sophisticated, nation-state-level supply chain attacks tend to focus on high-value targets, but the foundational practices that reduce exposure to those attacks also protect against far more common threats.

What should you do if you suspect a software update was compromised?

Isolate any systems that installed the update. Do not attempt rollback or remediation without guidance from an incident response professional, because some supply chain implants persist through standard removal procedures. Contact the vendor, check whether they have issued any security advisories, and if your organization operates in a regulated sector, review your breach notification obligations before taking further action.

Is open-source software riskier than commercial software?

Open-source packages can be submitted and modified by a wide range of contributors, maintainers are often unpaid volunteers, and packages can be abandoned without notice. Commercial software narrows some of those risks but introduces others, specifically the risk that a vendor with persistent access to your environment becomes a pivot point for an attacker. Neither model is inherently safer; the controls you apply need to reflect the specific trust relationships involved.
