AI can make cyber defense faster and more consistent, but only when it is tied to evidence, clear ownership, and measurable outcomes. Without those constraints, it becomes a new source of noise. The goal is not to add a clever layer on top of existing tools. The goal is to reduce risk and reduce wasted effort in cybersecurity operations.

In 2024, the global average cost of a data breach reached USD 4.88 million, according to IBM's Cost of a Data Breach Report. The 2024 Verizon Data Breach Investigations Report (DBIR) found the human element involved in 68 percent of breaches, and ransomware or other extortion in about 32 percent. These numbers matter because they explain why AI projects that only improve reporting rarely move the needle. The best outcomes come from AI that strengthens identity, reduces exposure, and speeds up containment without creating unsafe automation.

Where AI Helps In Cybersecurity

Most security teams do not need more detections. They need better decisions under pressure. AI earns its place when it improves one of three things.

First, it compresses investigation time by turning messy telemetry into a coherent timeline that an analyst can verify. Second, it prioritizes work by connecting an issue to asset criticality and real-world exploit paths. Third, it improves consistency, which is how good teams avoid repeating the same mistakes across shifts.
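The first of these, turning telemetry into a timeline an analyst can verify, is mostly deterministic plumbing that should happen before any model sees the data. A minimal Python sketch, assuming each source exports events with an ID and an ISO 8601 timestamp (the `Event` fields and source labels are hypothetical), normalizes timestamps to UTC, skips exact re-ingestions, and keeps each event's source ID so every line can be checked against the original record:

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Event:
    source: str       # hypothetical source label, e.g. "idp" or "edr"
    event_id: str     # ID in the source system, kept so each line can be verified
    timestamp: str    # ISO 8601 string as emitted by the source
    description: str


def to_utc(ts: str) -> datetime:
    """Parse an ISO 8601 timestamp; naive values are assumed to be UTC."""
    dt = datetime.fromisoformat(ts.replace("Z", "+00:00"))
    if dt.tzinfo is None:
        dt = dt.replace(tzinfo=timezone.utc)
    return dt.astimezone(timezone.utc)


def build_timeline(events: list[Event]) -> list[tuple[datetime, Event]]:
    """Merge multi-source events into one UTC-ordered timeline, skipping duplicate ingestions."""
    seen: set[tuple[str, str]] = set()
    timeline: list[tuple[datetime, Event]] = []
    for ev in events:
        key = (ev.source, ev.event_id)
        if key in seen:
            continue  # the same record ingested twice adds nothing
        seen.add(key)
        timeline.append((to_utc(ev.timestamp), ev))
    timeline.sort(key=lambda item: item[0])
    return timeline


if __name__ == "__main__":
    events = [
        Event("idp", "a1", "2024-05-01T10:05:00Z", "MFA push approved"),
        Event("edr", "e7", "2024-05-01T12:01:00+02:00", "PowerShell spawned by mail client"),
        Event("idp", "a1", "2024-05-01T10:05:00Z", "MFA push approved"),
    ]
    for ts, ev in build_timeline(events):
        print(ts.isoformat(), ev.source, ev.event_id, ev.description)
```

Ordering on UTC instants matters here: the EDR event stamped 12:01 at +02:00 actually happened at 10:01 UTC, before the 10:05 UTC identity event, even though its local clock reading is later.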

AI tends to perform reliably in cyber when the inputs are structured and the output is easy to validate against ground truth. It also performs well when it is used as a drafting tool that produces a starting point rather than a final answer. The failure pattern is equally consistent. If a model is asked to decide whether something is true without sufficient evidence, it will often produce confident text that looks plausible. That is not malice. It is a design limitation.

AI Across The Cyber Lifecycle

AI use cases sound similar in vendor demos, yet they behave very differently in production. Treat them as separate product decisions.

Detection support includes alert clustering, deduplication, and anomaly discovery across identity, endpoint, cloud, and network sources. This is the category that most often fails through false positives and missed context. The fix is not a smarter model. The fix is stable baselines and clear scoping to high-value assets.
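Deduplication itself does not need a model, and doing it deterministically keeps it auditable. A minimal sketch, assuming alerts carry a rule name, host, user, and epoch timestamp (hypothetical fields) and a 15-minute window chosen for illustration, groups repeats of the same fingerprint and then scopes by a high-value asset list. Out-of-scope clusters go to a deferred queue for sampling rather than being discarded, so scoping does not silently drop signals:

```python
from collections import defaultdict
from dataclasses import dataclass, field

Fingerprint = tuple[str, str, str]  # (rule, host, user)


@dataclass
class Alert:
    rule: str
    host: str
    user: str
    ts: int  # epoch seconds


@dataclass
class Cluster:
    fingerprint: Fingerprint
    alerts: list[Alert] = field(default_factory=list)


def cluster_alerts(alerts: list[Alert], window_s: int = 900) -> list[Cluster]:
    """Group alerts sharing a fingerprint; a gap longer than window_s starts a new cluster."""
    by_fp: dict[Fingerprint, list[Alert]] = defaultdict(list)
    for a in alerts:
        by_fp[(a.rule, a.host, a.user)].append(a)
    clusters: list[Cluster] = []
    for fp, group in by_fp.items():
        group.sort(key=lambda a: a.ts)
        current = Cluster(fp)
        for a in group:
            if current.alerts and a.ts - current.alerts[-1].ts > window_s:
                clusters.append(current)
                current = Cluster(fp)
            current.alerts.append(a)
        clusters.append(current)
    return clusters


def split_by_scope(
    clusters: list[Cluster], high_value_hosts: set[str]
) -> tuple[list[Cluster], list[Cluster]]:
    """Send clusters on high-value hosts to analysts; defer the rest for sampling instead of dropping them."""
    priority = [c for c in clusters if c.fingerprint[1] in high_value_hosts]
    deferred = [c for c in clusters if c.fingerprint[1] not in high_value_hosts]
    return priority, deferred
```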

Exposure decisions include vulnerability and misconfiguration prioritization based on reachability, exploitability, and business impact. This is where many teams see the quickest risk reduction because it helps them stop spreading effort across low-value tickets.
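A minimal sketch of that prioritization, assuming each finding already carries reachability, exploit data, and an owner-assigned criticality (the field names and weights are hypothetical, not a standard), multiplies the three factors and returns them alongside the score so an analyst can see why an item ranks where it does:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    finding_id: str
    internet_reachable: bool     # reachability: an exposed path from an untrusted network
    known_exploited: bool        # exploitability: e.g. listed in a known-exploited catalog
    exploit_probability: float   # exploitability estimate in [0, 1], e.g. an EPSS-style score
    asset_criticality: int       # business impact: 1 (low) to 5 (critical), set by the asset owner


def priority_score(f: Finding) -> tuple[float, dict[str, float]]:
    """Return a score plus the factors behind it, so the ranking stays explainable.

    The weights are illustrative assumptions; tune them against your own incident history.
    """
    factors = {
        "reachability": 1.0 if f.internet_reachable else 0.3,
        "exploitability": 1.0 if f.known_exploited else f.exploit_probability,
        "impact": f.asset_criticality / 5,
    }
    score = factors["reachability"] * factors["exploitability"] * factors["impact"]
    return round(score, 3), factors


def rank(findings: list[Finding]) -> list[tuple[str, float, dict[str, float]]]:
    """Sort findings from highest to lowest score, keeping the factors for each."""
    scored = [(f.finding_id, *priority_score(f)) for f in findings]
    return sorted(scored, key=lambda row: row[1], reverse=True)
```

Multiplying rather than adding means a weak factor pulls the whole score down, so a critical asset with no reachable path does not automatically outrank a reachable, known-exploited issue on a moderately important system.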

Investigation acceleration includes evidence summaries, hypothesis suggestions, and guided triage steps. This can save hours per incident, but it needs strict guardrails because a wrong containment action can do more damage than the intrusion.

Communication and governance include executive summaries, control gap narratives, and metrics. AI can improve clarity here, but it is also where false precision becomes dangerous. A clean dashboard that is not backed by auditable definitions creates a misleading sense of control.

The New Threat Model For AI In Cybersecurity

AI changes the threat landscape in two directions. Attackers use it to scale social engineering and automate parts of intrusion work. Defenders deploy AI systems that become new targets.

In 2025, two frameworks are particularly practical for teams building AI into cyber operations. NIST published updated guidance (NIST AI 100-2 E2025) that maps adversarial machine learning attacks across attacker goals and lifecycle stages. The UK published an AI Cyber Security Code of Practice that emphasizes secure development, integration, and operational controls for AI systems. The point is not compliance theater. The point is that AI systems have their own supply chain, their own drift problems, and their own abuse cases.

Three risks show up repeatedly in real deployments.

Data exposure happens when prompts, logs, transcripts, or embeddings capture sensitive content that was never meant to leave a protected boundary. Integrity failures happen when a model is influenced by poisoned retrieval sources, corrupted training data, or untrusted content that changes its behavior. Control failures happen when prompt injection or tool abuse pushes an assistant to take actions outside policy.
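For the data exposure risk, one common control is to redact obvious secrets before text crosses the boundary into a prompt, transcript, or log, and to count what was redacted. A minimal sketch using Python's `re` module; the three patterns are illustrative assumptions and nowhere near complete coverage of real secrets or personal data:

```python
import re

# Illustrative patterns only; a real deployment needs patterns tuned to its own secrets and data.
PATTERNS = {
    "aws_access_key": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "bearer_token": re.compile(r"(?i)\bbearer\s+[A-Za-z0-9._~+/-]+=*"),
}


def redact(text: str) -> tuple[str, dict[str, int]]:
    """Replace matches with typed placeholders and return per-pattern hit counts."""
    counts: dict[str, int] = {}
    for name, pattern in PATTERNS.items():
        text, n = pattern.subn(f"[REDACTED:{name}]", text)
        if n:
            counts[name] = n
    return text, counts
```

The per-pattern counts can double as a data leakage indicator: a rising number of redactions per week suggests sensitive content is reaching the assistant's inputs more often.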

Safety Controls For AI In Cyber

The most common security failures are integration failures. The model is rarely the only moving part.

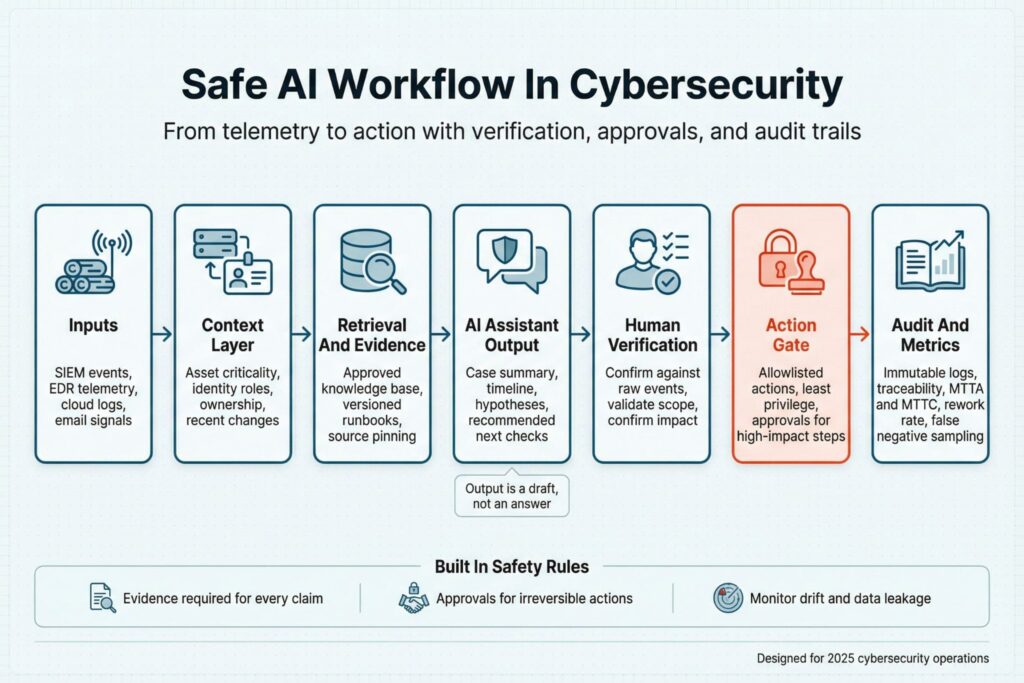

The table below captures practical controls that keep AI aligned with cyber objectives.

| AI Use In Cybersecurity | What The System Touches | Common Failure | Controls That Work In Practice |
|---|---|---|---|
| Alert triage and clustering | SIEM and EDR events, identity context | Important signals get buried or dropped | Scope to high-value assets, measure false negatives, require analyst sign-off on high-impact closures |
| Phishing analysis | Email headers, URLs, user reports | Over-trust in text quality | Keep header and link analysis deterministic, quarantine workflows, enforce takedown and user notification steps |
| Copilot with actions | Tickets, runbooks, security tool APIs | Prompt injection causes unsafe actions | Least-privilege tool access, allowlisted actions, immutable audit logs, approval gates for destructive steps |
| Threat intel summaries | External advisories and feeds | Hallucinated or stale claims | Source-bound retrieval, expiry handling, analyst verification before operational changes |
| AI system security | Training data, retrieval corpus, prompts | Poisoning and drift | Provenance tracking, drift monitoring, change control for knowledge bases, periodic red teaming |
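The threat intel controls, source-bound retrieval and expiry handling, can be enforced before any summary is generated. A minimal sketch, assuming each retrieved claim records its source URL and publication date (the `IntelClaim` type and the 30-day default are hypothetical), passes only sourced, unexpired claims onward and keeps the rest with a reason so an analyst can review them:

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass(frozen=True)
class IntelClaim:
    text: str
    source_url: Optional[str]  # None means the claim is not bound to any retrieved source
    published: date
    ttl_days: int = 30         # hypothetical shelf life; tune per feed


def usable_claims(
    claims: list[IntelClaim], today: date
) -> tuple[list[IntelClaim], list[tuple[IntelClaim, str]]]:
    """Keep only sourced, unexpired claims; return the rest with a rejection reason for review."""
    usable: list[IntelClaim] = []
    rejected: list[tuple[IntelClaim, str]] = []
    for c in claims:
        if not c.source_url:
            rejected.append((c, "no source"))
        elif today > c.published + timedelta(days=c.ttl_days):
            rejected.append((c, "expired"))
        else:
            usable.append(c)
    return usable, rejected
```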

A secure design also needs a decision rule for accountability. AI can propose. Humans approve when the action is irreversible, legally sensitive, or likely to disrupt business operations.
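That decision rule can live in the integration layer so it is enforced regardless of what the model outputs. A minimal sketch, assuming a hypothetical allowlist of action names (not any real product's API): actions outside the allowlist are denied, irreversible, disruptive, or legally sensitive ones require human approval, and each decision is appended to a hash-chained log so that later alteration is detectable:

```python
import hashlib
import json
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    AUTO = "auto"
    NEEDS_APPROVAL = "needs_approval"
    DENY = "deny"


@dataclass(frozen=True)
class ActionPolicy:
    reversible: bool
    disruptive: bool


# Hypothetical allowlist; names are illustrative, not a real tool's API.
ALLOWLIST = {
    "enrich_case": ActionPolicy(reversible=True, disruptive=False),
    "draft_ticket": ActionPolicy(reversible=True, disruptive=False),
    "isolate_host": ActionPolicy(reversible=True, disruptive=True),
    "disable_account": ActionPolicy(reversible=True, disruptive=True),
    "purge_email": ActionPolicy(reversible=False, disruptive=True),
}

AUDIT_LOG: list[dict] = []


def gate(action: str, legally_sensitive: bool = False) -> Decision:
    """AI can propose; this decides whether the proposal may run without a human."""
    policy = ALLOWLIST.get(action)
    if policy is None:
        return Decision.DENY  # not allowlisted: never executed by the assistant
    if not policy.reversible or policy.disruptive or legally_sensitive:
        return Decision.NEEDS_APPROVAL
    return Decision.AUTO


def record(action: str, decision: Decision, actor: str = "assistant") -> dict:
    """Append an entry chained to the previous hash, so altering history is detectable on re-verification."""
    prev = AUDIT_LOG[-1]["hash"] if AUDIT_LOG else "0" * 64
    entry = {"action": action, "decision": decision.value, "actor": actor, "prev": prev}
    entry["hash"] = hashlib.sha256(json.dumps(entry, sort_keys=True).encode()).hexdigest()
    AUDIT_LOG.append(entry)
    return entry
```

Host isolation and account disablement are reversible in principle but disruptive, so they still route to approval, in line with the rule that humans approve actions likely to disrupt business operations.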

How To Implement AI Without Breaking Operations

A successful rollout starts with one workflow that already has decent data and a definition of done. Do not start with broad automation. Start with read-only assistance and build confidence with measurable improvements.

Use a narrow pilot where you can track outcomes, then expand only when the system is stable. The safest sequence looks like this:

- Read-only support that summarizes evidence and highlights inconsistencies in telemetry.

- Explainable scoring that ranks items while showing the internal signals behind the ranking.

- Constrained automation that drafts tickets, enriches cases, and proposes playbook steps.

- Action-taking automation only for reversible steps with monitoring and an approval path.

This sequence prevents a common failure mode where teams feel faster for two weeks, then discover they have created a hidden backlog of wrong closures, inconsistent containment, and unclear audit trails.

Metrics That Prove Cyber Value

AI should be judged like any other cybersecurity control. It either improves risk and operations, or it does not. Avoid vanity metrics such as the number of summaries generated or vendor-claimed hours saved. Track measures that connect to cyber outcomes.

A small set of metrics is usually enough, and it should be tied to the workflow you chose. You can use:

- Mean time to acknowledge and contain for incident types where AI is used

- Rework rate measured through reopened tickets and post-closure escalations

- False negative sampling results from retrospective hunts and control checks

- Data leakage indicators such as sensitive strings in prompts, logs, and exports

- Drift indicators such as rising disagreement between AI output and final analyst disposition
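Most of these measures can be computed from case records you likely already keep. A minimal sketch, assuming each case records open, acknowledge, and contain times, a reopened flag, and both the AI's and the analyst's final disposition (the `Case` fields are hypothetical); the drift check compares the latest weekly disagreement rate with an early baseline using an illustrative five-point tolerance:

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean
from typing import Optional


@dataclass
class Case:
    opened: datetime
    acknowledged: datetime
    contained: Optional[datetime]  # None if containment was not needed or not done
    reopened: bool                 # reopened or escalated after closure
    ai_disposition: str            # e.g. "benign" or "malicious"
    analyst_disposition: str


def workflow_metrics(cases: list[Case]) -> dict[str, float]:
    """Compute MTTA/MTTC in minutes, rework rate, and AI-vs-analyst disagreement rate."""
    mtta = mean((c.acknowledged - c.opened).total_seconds() / 60 for c in cases)
    contained = [c for c in cases if c.contained is not None]
    mttc = (
        mean((c.contained - c.opened).total_seconds() / 60 for c in contained)
        if contained
        else float("nan")
    )
    return {
        "mtta_min": mtta,
        "mttc_min": mttc,
        "rework_rate": sum(c.reopened for c in cases) / len(cases),
        "disagreement_rate": sum(c.ai_disposition != c.analyst_disposition for c in cases) / len(cases),
    }


def drift_alert(weekly_disagreement: list[float], baseline_weeks: int = 4, tolerance: float = 0.05) -> bool:
    """Flag drift when the latest week exceeds the baseline mean by more than the tolerance."""
    if len(weekly_disagreement) <= baseline_weeks:
        return False  # not enough history to compare against
    baseline = mean(weekly_disagreement[:baseline_weeks])
    return weekly_disagreement[-1] > baseline + tolerance
```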

Keep the review cadence fixed. Weekly sampling beats quarterly dashboards because drift and prompt regressions show up quickly.
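A fixed, reproducible weekly sample keeps false-negative review honest, because cases are not hand-picked after their outcomes are known. A minimal sketch, assuming you can list the IDs of cases closed that week; seeding Python's `random.Random` with the ISO week label means anyone rerunning the draw for the same week gets the same cases, and the sample size of 20 is an illustrative default:

```python
import random
from datetime import date


def weekly_review_sample(closed_case_ids: list[str], week_of: date, k: int = 20) -> list[str]:
    """Draw a reproducible sample of closed cases for the ISO week containing week_of."""
    year, week, _ = week_of.isocalendar()
    rng = random.Random(f"{year}-W{week:02d}")  # string seeds are deterministic across runs
    ids = sorted(closed_case_ids)                # input order must not change the draw
    return rng.sample(ids, min(k, len(ids)))
```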

Choosing Tools That Fit Your Team

The fastest way to waste money is to buy AI that assumes perfect inventory, perfect logging, and perfect process maturity. In cyber reality, context is always incomplete. Choose systems that tolerate that reality.

Look for products and internal builds that can ingest asset owners and business criticality, show evidence for decisions, integrate through least-privilege connectors, and produce an audit trail that stands up to internal review. Avoid systems that hide scoring logic behind opaque labels and push action-taking agents before you have a stable knowledge base.

AI can make cyber defense more humane by reducing repetitive triage and by giving analysts clearer starting points. It does that only when you treat it as a controlled system with safety boundaries, measurable outcomes, and a clear chain of responsibility.

Frequently Asked Questions (FAQ)

What can AI reliably do in cybersecurity operations?

It can cluster and deduplicate alerts, summarize evidence into a case timeline, and help prioritize exposure when it has asset context. It is most dependable when outputs can be checked against logs, tickets, and known baselines. Treat it as decision support that speeds review, not as proof of compromise.

Where does AI fail in security operations?

It fails when it is asked to make high-confidence calls from incomplete telemetry, or when it reduces work by silently discarding signals. It also creates churn when it produces polished narratives that do not cite the underlying events. If analysts must re-check every claim, you have added a reporting layer, not operational value.

How do you measure whether AI is actually helping?

Track mean time to acknowledge and contain for the workflows where AI is used, and measure rework through reopened tickets or post-closure escalations. Add false-negative sampling by reviewing a fixed set of closed cases each week against retrospective hunts or control checks. If disagreement between AI output and analysts' final decisions rises, treat it as drift and investigate.

Should AI be allowed to take automated actions?

Only for actions that are reversible, low-impact, and already standardized in playbooks, such as enrichment, ticket drafting, or adding context to a case. Require approval for disruptive steps like account disablement, host isolation, or firewall changes. Keep an immutable audit trail of inputs, outputs, and actions for every automated step.

What is a good first AI use case for a security team?

Start with read-only incident summarization for a narrow set of recurring cases, such as suspicious logins or cloud identity anomalies. Use a fixed template for what the summary must include, and require links back to the exact events in your SIEM or EDR. Once the summaries are consistently accurate, move to prioritization, and only then consider constrained automation.
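The fixed template and the link-back rule can be checked mechanically before a summary reaches an analyst. A minimal sketch, assuming a hypothetical template with four labeled sections and a hypothetical `[evt:ID]` citation syntax for SIEM or EDR event IDs; a summary fails if a section is missing, if it cites nothing, or if it cites an ID that does not exist in the source data:

```python
import re

# Hypothetical template and citation syntax; adapt both to your own case format.
REQUIRED_SECTIONS = ("Summary", "Affected assets", "Timeline", "Recommended next step")
EVENT_REF = re.compile(r"\[evt:([A-Za-z0-9_-]+)\]")


def validate_summary(text: str, known_event_ids: set[str]) -> list[str]:
    """Return a list of problems; an empty list means the summary passes."""
    problems = [f"missing section: {s}" for s in REQUIRED_SECTIONS if f"{s}:" not in text]
    cited = set(EVENT_REF.findall(text))
    if not cited:
        problems.append("no event citations")
    problems.extend(f"unknown event id: {eid}" for eid in sorted(cited - known_event_ids))
    return problems
```

This checks structure and traceability only; whether the narrative is correct remains the analyst's call.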
