Security teams frequently equate severity with risk, a miscalculation that leads to inefficient patch management and resource exhaustion. The Common Vulnerability Scoring System (CVSS) offers a standardized framework for assessing the technical severity of software flaws. However, a high CVSS score does not automatically translate to high risk for every organization. Prioritizing threats requires a clear grasp of how these scores are calculated and where the methodology falls short.

The Mechanics of CVSS Scoring

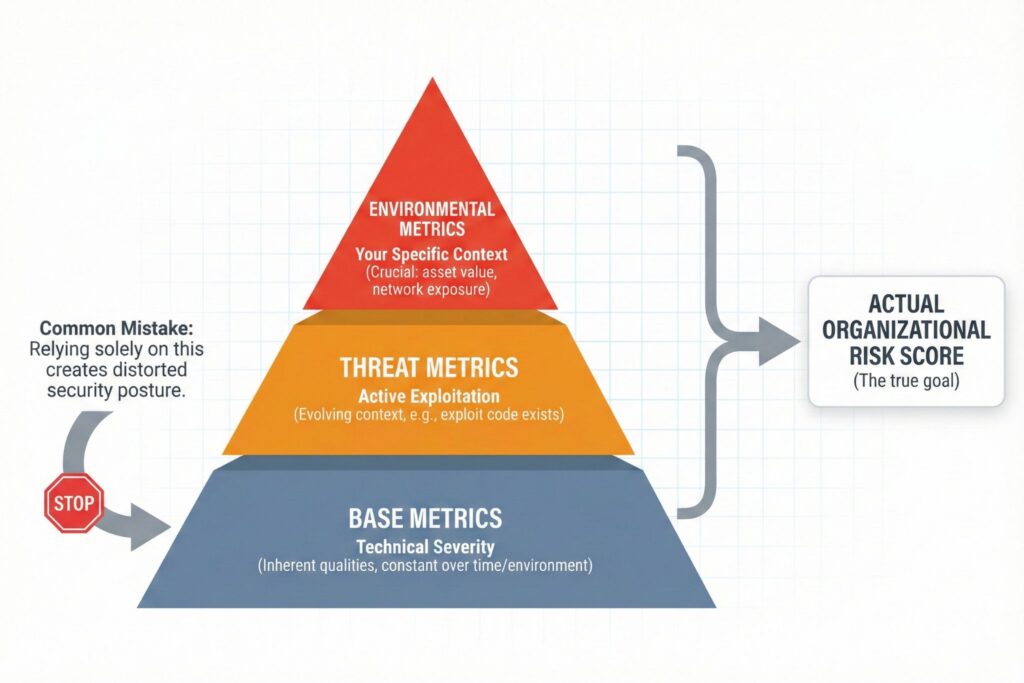

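CVSS assigns a score from 0.0 to 10.0 using three metric groups. (Version 4.0 also defines a Supplemental group, which adds context but does not change the score.) A score that reflects an organization's actual exposure requires evaluating all three, though many organizations stop after the first.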

- Base Metric Group: This reflects the inherent qualities of a vulnerability that remain constant across time and environments. It forms the foundation of the score by analyzing exploitability (how easily the flaw can be exploited) and impact (the consequences of successful exploitation). Key metrics include the Attack Vector (network versus physical access) and the privileges required to execute the attack.

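- Threat Metric Group: Called Temporal in v3.1, this group accounts for the evolving nature of a threat. A vulnerability may initially have a low threat level, which rises once exploit code is published or attacks are observed. In v3.1 the score could also drop once a vendor released an official fix (the Remediation Level metric); v4.0 removed that metric and keeps Exploit Maturity as the only metric in this group.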

- Environmental Metric Group: This component allows teams to tailor the score to their specific infrastructure. It is frequently the most critical yet neglected variable. For example, a critical vulnerability on an air-gapped server (one disconnected from the internet) poses significantly less danger than the same flaw on a public-facing web server. Adjusting environmental metrics allows teams to deprioritize isolated assets in favor of exposed targets.
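The three groups are visible directly in a CVSS vector string. The sketch below splits a v4.0 vector into its groups, using the metric abbreviations listed in the v4.0 specification. The function name `split_vector` and the example vector are illustrative, not part of any official tooling, and the code only parses the string; it does not compute a score.

```python
# Metric abbreviations per group, as listed in the CVSS v4.0 specification.
BASE = {"AV", "AC", "AT", "PR", "UI", "VC", "VI", "VA", "SC", "SI", "SA"}
THREAT = {"E"}
ENVIRONMENTAL = {
    "CR", "IR", "AR",
    "MAV", "MAC", "MAT", "MPR", "MUI",
    "MVC", "MVI", "MVA", "MSC", "MSI", "MSA",
}


def split_vector(vector: str) -> dict[str, dict[str, str]]:
    """Split a CVSS v4.0 vector string into its metric groups (hypothetical helper)."""
    prefix, _, body = vector.partition("/")
    if prefix != "CVSS:4.0":
        raise ValueError(f"expected a CVSS:4.0 vector, got prefix {prefix!r}")

    groups: dict[str, dict[str, str]] = {
        "base": {}, "threat": {}, "environmental": {}, "other": {},
    }
    for part in body.split("/"):
        name, sep, value = part.partition(":")
        if not sep or not value:
            raise ValueError(f"malformed metric {part!r}")
        if name in BASE:
            groups["base"][name] = value
        elif name in THREAT:
            groups["threat"][name] = value
        elif name in ENVIRONMENTAL:
            groups["environmental"][name] = value
        else:
            groups["other"][name] = value  # e.g. Supplemental metrics
    return groups


# Illustrative vector: a network-reachable flaw with observed attacks (E:A),
# and the attack vector modified to Local (MAV:L) for an isolated deployment.
example = "CVSS:4.0/AV:N/AC:L/AT:N/PR:N/UI:N/VC:H/VI:H/VA:H/SC:N/SI:N/SA:N/E:A/MAV:L"
groups = split_vector(example)
print(groups["threat"])         # {'E': 'A'}
print(groups["environmental"])  # {'MAV': 'L'}
```

A vector that contains only Base metrics is a quick signal that the Threat and Environmental groups were never evaluated.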

Analyzing the Shift from Version 3.1 to 4.0

The release of CVSS v4.0 in late 2023 addressed long-standing criticisms regarding the gap between theoretical severity and actual risk. While v3.1 prioritized theoretical severity, v4.0 emphasizes granularity and real-world threat context.

A primary adjustment was the removal of the Scope metric. This variable often confused practitioners and led to inconsistent scoring. v4.0 replaced it with separate impact metrics for the Vulnerable System and any Subsequent Systems. Separately, v4.0 introduced Attack Requirements (AT), a metric distinct from Attack Complexity (AC). This separation allows analysts to differentiate between exploits requiring complex engineering to get past defenses (AC) and those dependent on specific prerequisites in the target, such as winning a race condition (AT).

Furthermore, v4.0 gives Threat Metrics a more prominent place in the scoring process. In v3.1, Temporal metrics were optional and frequently omitted. The new standard pushes organizations to evaluate whether a vulnerability is actively exploited in the wild rather than reacting solely to a theoretical base score.
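One way v4.0 makes this visible is its nomenclature: a score is labeled CVSS-B, CVSS-BT, CVSS-BE, or CVSS-BTE depending on which metric groups were supplied. The sketch below derives that label from a vector string. The function name `cvss4_nomenclature` is hypothetical, and the code assumes a well-formed v4.0 vector.

```python
# Environmental metric abbreviations from the CVSS v4.0 specification.
ENVIRONMENTAL = {
    "CR", "IR", "AR",
    "MAV", "MAC", "MAT", "MPR", "MUI",
    "MVC", "MVI", "MVA", "MSC", "MSI", "MSA",
}


def cvss4_nomenclature(vector: str) -> str:
    """Return CVSS-B, CVSS-BT, CVSS-BE, or CVSS-BTE for a v4.0 vector string."""
    # Skip the "CVSS:4.0" prefix, then read each "NAME:VALUE" pair.
    metrics = dict(part.split(":", 1) for part in vector.split("/")[1:])
    # A metric set to X ("Not Defined") does not count as supplied.
    supplied = {name for name, value in metrics.items() if value != "X"}

    has_threat = "E" in supplied  # Exploit Maturity
    has_environmental = bool(supplied & ENVIRONMENTAL)
    return "CVSS-B" + ("T" if has_threat else "") + ("E" if has_environmental else "")


base = "CVSS:4.0/AV:N/AC:L/AT:N/PR:N/UI:N/VC:H/VI:H/VA:H/SC:N/SI:N/SA:N"
print(cvss4_nomenclature(base))                 # CVSS-B
print(cvss4_nomenclature(base + "/E:A"))        # CVSS-BT
print(cvss4_nomenclature(base + "/E:P/CR:H"))   # CVSS-BTE
```

A feed that only ever reports CVSS-B values is reporting base severity, with no threat or environmental context applied.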

Feature Comparison: v3.1 vs. v4.0

| Feature | CVSS v3.1 | CVSS v4.0 |
| --- | --- | --- |
| Focus | Technical Severity | Severity + Threat Context |
| Variable Metrics | Temporal (Optional) | Threat (Integral/Emphasized) |
| Complexity | Attack Complexity (AC) | AC + Attack Requirements (AT) |
| Impact Scope | Scope (S) Metric | Vulnerable vs. Subsequent System |
| User Interaction | None / Required (passive and active combined) | None / Passive / Active |

The NVD Backlog and Data Reliability

The reliability of CVSS scores hinges on the National Vulnerability Database (NVD), which analyzes and scores Common Vulnerabilities and Exposures (CVEs). A processing crisis in 2024 and 2025 severely impacted this centralized model.

The volume of reported vulnerabilities has consistently outpaced the NVD’s analysis resources. By early 2025, the backlog exceeded 26,000 CVEs listed as awaiting analysis. Consequently, security teams often lack official scores for thousands of potential threats. This operational gap forces organizations to depend on vendor-supplied scores or raw data from CVE Numbering Authorities (CNAs), introducing variability in quality and potential bias.
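When scores come from several sources, it helps to record where each one came from so that later triage can account for differences in quality. The following is a minimal sketch under stated assumptions: the source labels (`nvd`, `cna`, `vendor`), the preference order, and the `pick_score` helper are all hypothetical and do not mirror any real feed format.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ScoreChoice:
    score: Optional[float]  # None when no source has scored the CVE yet
    source: str             # which source the score came from


# Hypothetical preference order; adjust to the organization's own trust model.
PREFERENCE = ("nvd", "cna", "vendor")


def pick_score(scores: dict[str, float]) -> ScoreChoice:
    """Pick the first available score in preference order and keep its provenance."""
    for source in PREFERENCE:
        if source in scores:
            return ScoreChoice(scores[source], source)
    return ScoreChoice(None, "unscored")


# Illustrative records: one awaiting NVD analysis, one not scored by anyone.
print(pick_score({"cna": 8.1, "vendor": 9.0}))  # ScoreChoice(score=8.1, source='cna')
print(pick_score({}))                           # ScoreChoice(score=None, source='unscored')
```

Keeping the `source` field alongside the score lets a team re-check CNA or vendor values once an NVD analysis eventually appears.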

Annual Vulnerability Volume Trends

| Year | Total Published CVEs | Avg. Daily New CVEs | Status |
| --- | --- | --- | --- |
| 2023 | ~29,000 | ~80 | Analyzed |
| 2024 | ~40,000+ | ~115 | Major Backlog |
| 2025 (Proj) | ~50,000+ | ~135 | Critical Delays |

Limitations in Risk Management

Basing decisions solely on CVSS Base scores results in a distorted security posture. A vulnerability rated 9.8 might exist in a software library that the application never loads into memory, making the flaw inert. Conversely, a moderate 5.5 vulnerability could be chained with a secondary flaw to grant administrative access.
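It helps to see what actually goes into a base score. The sketch below implements the base score equations and weights from the CVSS v3.1 specification; v4.0 uses a different, lookup-based method that is not shown here. The function names and the two example vectors are illustrative, chosen so that they produce a 9.8 and a 5.5.

```python
import math

# Numeric weights from the CVSS v3.1 specification.
AV = {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.2}
AC = {"L": 0.77, "H": 0.44}
UI = {"N": 0.85, "R": 0.62}
CIA = {"H": 0.56, "L": 0.22, "N": 0.0}
PR_UNCHANGED = {"N": 0.85, "L": 0.62, "H": 0.27}
PR_CHANGED = {"N": 0.85, "L": 0.68, "H": 0.5}


def roundup(value: float) -> float:
    """Round up to one decimal place, following the v3.1 Roundup definition."""
    scaled = round(value * 100000)
    if scaled % 10000 == 0:
        return scaled / 100000.0
    return (math.floor(scaled / 10000) + 1) / 10.0


def base_score_v31(vector: str) -> float:
    """Compute the v3.1 base score from a vector containing all base metrics."""
    m = dict(part.split(":", 1) for part in vector.split("/")[1:])
    changed = m["S"] == "C"
    pr = (PR_CHANGED if changed else PR_UNCHANGED)[m["PR"]]

    # Impact sub-score: combined loss of confidentiality, integrity, availability.
    iss = 1 - (1 - CIA[m["C"]]) * (1 - CIA[m["I"]]) * (1 - CIA[m["A"]])
    if changed:
        impact = 7.52 * (iss - 0.029) - 3.25 * (iss - 0.02) ** 15
    else:
        impact = 6.42 * iss
    exploitability = 8.22 * AV[m["AV"]] * AC[m["AC"]] * pr * UI[m["UI"]]

    if impact <= 0:
        return 0.0
    total = impact + exploitability
    return roundup(min(1.08 * total if changed else total, 10))


print(base_score_v31("CVSS:3.1/AV:N/AC:L/PR:N/UI:N/S:U/C:H/I:H/A:H"))  # 9.8
print(base_score_v31("CVSS:3.1/AV:L/AC:L/PR:L/UI:N/S:U/C:H/I:N/A:N"))  # 5.5
```

Every input describes the flaw itself. Nothing in the calculation knows whether the vulnerable library is ever loaded, or whether the finding can be chained with another one.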

Missing Business Context

CVSS creates a technical score without knowledge of the asset’s value. A high score on a server hosting a cafeteria menu represents negligible financial risk compared to a medium score on a database storing customer credit card information.

Operational Latency

A significant window exists between a vulnerability’s disclosure and its official NVD scoring. Attackers use this delay to exploit newly disclosed flaws while defenders wait for a score to justify the patch cycle.

Score Inflation

Vendors may inflate severity ratings to encourage rapid patching, whereas internal teams might deflate scores to satisfy compliance KPIs. Although CVSS v4.0 attempts to standardize this, subjective interpretation remains a variable.

Strategic Prioritization

Effective vulnerability management requires moving beyond the basic strategy of patching everything rated above 7.0. Teams must synthesize CVSS scores with threat intelligence and asset criticality.

Integrating the Exploit Prediction Scoring System (EPSS) alongside CVSS improves decision-making. While CVSS measures the severity of the wound, EPSS estimates the probability that the vulnerability will be exploited in the wild within the next 30 days. A vulnerability with a CVSS of 9.0 but an EPSS of 0.01% generally warrants less immediate attention than a CVSS 7.0 with an EPSS of 95%.
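As a rough illustration of combining these signals, the sketch below ranks findings by severity, exploitation likelihood, and asset criticality. The multiplicative formula, the `asset_weight` scale, and the sample findings are assumptions made for the example; real programs typically tune their own weighting or use threshold-based rules.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    name: str
    cvss: float          # severity, 0.0 to 10.0
    epss: float          # probability of exploitation in the next 30 days, 0.0 to 1.0
    asset_weight: float  # hypothetical criticality, 0.0 to 1.0, set by the organization


def priority(finding: Finding) -> float:
    """Hypothetical blend: severity scaled by likelihood and asset criticality."""
    return (finding.cvss / 10.0) * finding.epss * finding.asset_weight


findings = [
    Finding("severe but unlikely", cvss=9.0, epss=0.0001, asset_weight=1.0),
    Finding("moderate but likely", cvss=7.0, epss=0.95, asset_weight=1.0),
    Finding("severe, low-value asset", cvss=9.0, epss=0.95, asset_weight=0.05),
]

# Highest priority first.
ranked = sorted(findings, key=priority, reverse=True)
for finding in ranked:
    print(f"{finding.name}: {priority(finding):.5f}")
```

With these sample values, the CVSS 7.0 finding with a 95% EPSS ranks first and the CVSS 9.0 finding with a 0.01% EPSS ranks last, matching the reasoning above.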

FAQ

What is the main change in CVSS v4.0 compared with v3.1?

The primary shift in CVSS v4.0 is the removal of the confusing “Scope” metric in favor of separate Vulnerable System and Subsequent System impact metrics, along with the new “Attack Requirements” (AT) metric. Additionally, v4.0 gives Threat Metrics a more prominent role than the optional Temporal metrics of v3.1, helping organizations differentiate between theoretical severity and actual real-world threats.

Why is a high CVSS score not always a high risk?

CVSS measures technical severity, not business risk. A high-scoring vulnerability (e.g., 9.8) may exist on an isolated, air-gapped server or within a software library that is never loaded into memory. Without accounting for Environmental Metrics and asset value, the score does not reflect the actual danger to the organization.

How does the NVD backlog affect security teams?

As of early 2025, the National Vulnerability Database (NVD) has a backlog of over 26,000 CVEs awaiting analysis. This means security teams cannot rely solely on official NVD scores for new threats and must instead use vendor-supplied data or raw inputs from CVE Numbering Authorities (CNAs) to assess risks in a timely manner.

Does EPSS replace CVSS?

No, EPSS and CVSS should be used together. CVSS determines the severity of a vulnerability (how bad the impact is), while EPSS predicts the likelihood of it being exploited in the next 30 days. Combining them allows teams to prioritize severe vulnerabilities that are also highly likely to be attacked.
