Patch management looks like routine work until the day it is not. A single unpatched edge device, an outdated VPN, or a neglected library inside a critical application can turn a small cyber incident into a business-wide outage. The uncomfortable truth in cybersecurity is that many attacks do not require creativity. They require time, exposure, and one reliably exploitable weakness.

What Patch Management Really Covers

In modern environments, patch management is not a monthly operating system update. It is a cross-team capability that spans vendor patches, configuration-level fixes, rebuilds, and managed services. If you only patch servers, you will still lose through browsers, VPN gateways, container images, and third-party components.

The table below helps teams align ownership and avoid blind spots.

| Patch Surface | What Counts As A Patch | Typical Owner | Common Failure Mode |
|---|---|---|---|
| Operating systems | Security updates, kernel fixes, agent compatibility | IT Ops | Incomplete coverage across fleets |
| Endpoints and apps | Browsers, office tools, PDF viewers, line-of-business clients | IT Ops plus endpoint team | Patch succeeds but breaks a plugin or SSO flow |
| Edge and access | VPN appliances, reverse proxies, gateways, email systems | Network and platform teams | Change windows are rare so exposure persists |
| Cloud and SaaS | Platform settings, security toggles, managed service updates | Cloud team plus service owner | Teams assume the provider patches everything |
| Containers and images | Base image rebuilds, dependency refresh, redeploy | DevOps plus application owners | Teams patch running containers instead of rebuilding |
| Firmware | BIOS, BMC, device firmware, embedded controllers | Infra team plus vendors | Devices are excluded due to downtime fear |
| Third-party libraries | Dependency upgrades, security backports | Developers | Vulnerabilities ship inside unchanged builds |

Treat scope as a contract. Every patch surface needs an owner, a fallback owner, and a way to prove state. Without that, patch management becomes reporting theater.
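
One way to make that contract checkable is to keep the scope as data and flag any surface that lacks an owner, a fallback owner, or a way to prove state. The sketch below is illustrative only: the `PatchSurface` type, its field names, and the `scope_gaps` helper are hypothetical and do not come from any particular tool.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class PatchSurface:
    """One row of the scope contract (hypothetical structure)."""
    name: str
    owner: Optional[str]
    fallback_owner: Optional[str]
    state_evidence: Optional[str]  # how patch state is proven, e.g. "authenticated scan"


def scope_gaps(surfaces: List[PatchSurface]) -> List[str]:
    """Return one message per missing owner, fallback owner, or evidence source."""
    gaps = []
    for s in surfaces:
        required = (
            ("owner", s.owner),
            ("fallback owner", s.fallback_owner),
            ("state evidence", s.state_evidence),
        )
        for label, value in required:
            if not value:
                gaps.append(f"{s.name}: missing {label}")
    return gaps


surfaces = [
    PatchSurface("Operating systems", "IT Ops", "Platform team", "authenticated scan"),
    PatchSurface("Firmware", "Infra team", None, None),
]
for gap in scope_gaps(surfaces):
    print(gap)
```

An empty result from a check like this does not prove the program works, but a non-empty one is a concrete list of blind spots to assign.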

Why Patch Delays Increase Cyber Risk

Attackers prefer what is dependable. Known vulnerabilities are dependable because exploitation patterns are repeatable, scanning is cheap, and defenders often move slowly. The risk is not only that a flaw exists. The risk is that it stays reachable long enough for automation to find it.

Patch lag also raises incident cost. When responders cannot trust patch status, every investigation expands. Teams lose time confirming what should have been obvious: which systems are vulnerable, which versions are running, and whether a known exploit path is plausible. That delay turns containment into a guessing game.

There is also a human angle that is often missed. Patch management reduces the number of urgent, high-stress decisions people must make at 2 a.m. A predictable program shifts work from crisis mode to planned change. That is a direct improvement in cyber resilience.

Risk-Based Prioritization That Engineers Will Accept

Severity scores are useful for sorting, but they rarely match how breaches happen. A medium-severity issue on an internet-facing service can be more dangerous than a critical issue on a segmented test box. Good patch management uses a small number of inputs that teams can validate quickly.

Use three signals and one modifier.

- Exposure and reachability in your environment

- Exploit signals that suggest real attacker interest

- Business criticality of the service and the data it touches

- Compensating controls that meaningfully reduce likelihood

If you need a simple tier model that works across most cyber programs, the table below is a good starting point. Adjust timing to your operating constraints and regulatory obligations.

| Tier | When To Use It | Examples | Target Window |
|---|---|---|---|
| Emergency | Clear exploitation signals or high-confidence threat activity, plus reachable exposure | VPN, identity, edge gateways, externally exposed apps | As fast as you can safely execute |
| High | Likely remote compromise or privilege escalation on widely used systems | Domain services, management consoles, jump hosts | Days, not weeks |
| Standard | Common vulnerabilities with limited exposure and strong segmentation | General servers, user endpoints | Regular cadence |
| Controlled Deferral | Patch unavailable or operational constraints are real and documented | Legacy OT devices, medical systems, vendor-locked platforms | Timeboxed exception with mitigations |
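
The three signals, the modifier, and the tiers can be combined into a small decision function. The following is a minimal sketch, not a recommended scoring scheme: the `Finding` fields, the boolean simplification of each signal, and the "two of three signals" threshold for High are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    EMERGENCY = "Emergency"
    HIGH = "High"
    STANDARD = "Standard"
    CONTROLLED_DEFERRAL = "Controlled Deferral"


@dataclass
class Finding:
    """Hypothetical record of one vulnerability on one service."""
    reachable: bool              # exposure and reachability in your environment
    exploit_signals: bool        # signs of real attacker interest
    business_critical: bool      # criticality of the service and its data
    compensating_controls: bool  # modifier: controls that meaningfully reduce likelihood
    patch_available: bool = True


def assign_tier(f: Finding) -> Tier:
    # No patch to apply: the only option is a timeboxed exception with mitigations.
    if not f.patch_available:
        return Tier.CONTROLLED_DEFERRAL
    # Exploitation signals plus reachable exposure is the Emergency definition.
    if f.exploit_signals and f.reachable:
        return Tier.EMERGENCY
    # Assumed rule: count the signals, let the modifier lower the count by one,
    # and treat two or more remaining signals as High.
    score = sum([f.reachable, f.exploit_signals, f.business_critical])
    if f.compensating_controls:
        score -= 1
    return Tier.HIGH if score >= 2 else Tier.STANDARD


edge_vpn = Finding(reachable=True, exploit_signals=True,
                   business_critical=True, compensating_controls=False)
test_box = Finding(reachable=False, exploit_signals=False,
                   business_critical=False, compensating_controls=True)
print(assign_tier(edge_vpn).value)
print(assign_tier(test_box).value)
```

The value of writing the rule down is less the code itself than the fact that engineers can read it, argue with it, and validate each input quickly.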

One practice that consistently reduces cyber risk is treating identity and edge access as a priority class. When authentication systems or perimeter gateways are weak, attackers move faster than defenders can contain.

Patch Cycles Without Downtime

The fastest way to destroy trust in patch management is to cause downtime. The fastest way to increase cyber risk is to avoid patching because downtime is feared. The solution is not heroics. It is engineering.

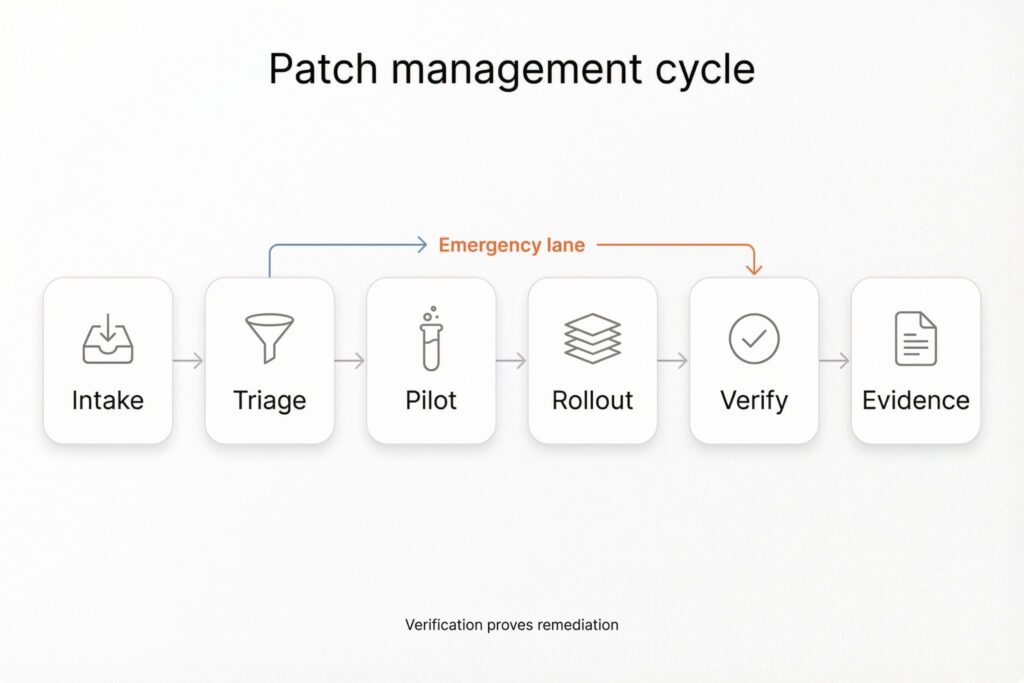

Start with predictable cadence, then add an emergency lane.

Many teams align routine work to vendor cycles. Microsoft, for example, schedules Patch Tuesday on the second Tuesday of each month. That predictability makes planning easier, but it does not remove the need for out-of-band action when exploitation spikes.
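
For teams that schedule pilot rings and maintenance windows relative to that date, the second Tuesday is easy to compute. This is a small sketch using only the standard library; the function name `patch_tuesday` is an illustrative choice, and any offsets you build on top of it are your own policy.

```python
import datetime


def patch_tuesday(year: int, month: int) -> datetime.date:
    """Return the second Tuesday of the given month."""
    first = datetime.date(year, month, 1)
    # date.weekday(): Monday is 0, Tuesday is 1.
    days_to_first_tuesday = (1 - first.weekday()) % 7
    return first + datetime.timedelta(days=days_to_first_tuesday + 7)


print(patch_tuesday(2024, 1))  # 2024-01-09
```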

A stable workflow has a few non-negotiables.

- Ownership is explicit and tied to services, not to servers

- Pilot rings exist and represent production reality

- Rollback is engineered, not improvised

- Verification is independent of deployment logs

Here is a practical example that shows how this looks in a real week.

A new advisory lands for an edge appliance that supports remote access. The asset is reachable from the internet and it sits close to privileged networks. The team classifies it as Emergency. The service owner approves a short maintenance window the same day. The network team applies the update, then confirms the running build version, validates authentication flows, and checks error rates and session stability. The SOC increases monitoring for unusual logins and new admin sessions for the next day. The result is not just a patched device. The result is a closed exposure with evidence.

That last word matters. Evidence is what makes patch management credible.

Verification That Proves The Risk Went Down

Many organizations confuse deployment with remediation. They push patches, dashboards go green, and the same vulnerabilities still appear in scans. Sometimes the patch did not install. Sometimes the service did not restart. Sometimes an active-passive pair stayed half-updated. In cyber terms, intent does not count.

Verification should be lightweight for routine changes and stricter for high-risk fixes. Keep it practical.

- Confirm version and configuration state on the asset itself

- Validate service health through the paths users actually take

- Use an independent signal such as authenticated scanning or endpoint telemetry

- Watch for regressions in logs, error rates, and authentication failures
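
The core of these checks is that the deployment tool's claim and the observed state are recorded separately, and only the observed state decides the outcome. The sketch below assumes plain dotted numeric version strings and uses hypothetical names (`AssetState`, `verification_status`); real products often need vendor-specific version parsing.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class AssetState:
    name: str
    deployment_reported_ok: bool     # what the deployment tool claims
    observed_version: Optional[str]  # read from the asset or an independent scan; None if unchecked


def parse_version(version: str) -> Tuple[int, ...]:
    # Assumption: versions look like "9.1.4" with numeric parts only.
    return tuple(int(part) for part in version.split("."))


def verification_status(asset: AssetState, fixed_version: str) -> str:
    """Classify an asset using observed state, ignoring the deployment claim."""
    if asset.observed_version is None:
        return "unverified"
    if parse_version(asset.observed_version) >= parse_version(fixed_version):
        return "verified"
    return "not_remediated"


# An active-passive pair where the deployment tool reports success on both nodes.
pair = [
    AssetState("vpn-a", deployment_reported_ok=True, observed_version="9.1.4"),
    AssetState("vpn-b", deployment_reported_ok=True, observed_version="9.1.3"),
]
for node in pair:
    print(node.name, verification_status(node, "9.1.4"))
```

In this example the second node would show as not remediated even though the dashboard is green, which is exactly the half-updated pair case described above.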

This is also where teams lower the fear of patching. When verification is consistent, outages become rarer, and emergency patches stop feeling like gambles.

How To Manage Patch Exceptions

Some systems cannot be patched quickly. Sometimes the vendor is slow. Sometimes a patch risks safety or compliance. Sometimes a tightly coupled application needs a coordinated release. Exceptions are not the problem. Unbounded exceptions are the problem.

Treat every exception as a risk decision with an expiry and a mitigation plan. A workable exception record includes:

- What is affected and what change is deferred

- Why the deferral is necessary right now

- The expiry date and the next review date

- Compensating controls such as segmentation, reduced exposure, or temporary feature disablement

- The accountable risk owner
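
The fields above map naturally onto a small record with a validity check. This is a sketch under stated assumptions: the `PatchException` type, its field names, and the specific rules in `problems` (for example, that the next review must fall on or before the expiry) are illustrative, not a standard.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List


@dataclass
class PatchException:
    """Hypothetical exception record mirroring the fields listed above."""
    affected: str
    deferred_change: str
    reason: str
    expiry: date
    next_review: date
    risk_owner: str
    compensating_controls: List[str] = field(default_factory=list)

    def problems(self, today: date) -> List[str]:
        """Return the reasons this exception is not acceptable as of `today`."""
        issues = []
        if not self.risk_owner.strip():
            issues.append("no accountable risk owner")
        if not self.compensating_controls:
            issues.append("no compensating controls")
        if self.expiry <= today:
            issues.append("expired")
        if self.next_review > self.expiry:
            issues.append("next review falls after expiry")
        return issues


record = PatchException(
    affected="legacy-hmi-01",
    deferred_change="vendor firmware update",
    reason="vendor fix not yet certified for this device",
    expiry=date(2025, 6, 30),
    next_review=date(2025, 5, 15),
    risk_owner="plant operations lead",
    compensating_controls=["isolated network segment"],
)
print(record.problems(today=date(2025, 7, 1)))
```

Running a check like this on a schedule is one way to keep exceptions bounded, because an expired record surfaces on its own instead of waiting for someone to remember it.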

This structure keeps patch management human. It stops security teams from becoming the department of no, and it stops operational teams from hiding risk behind indefinite postponement.

Metrics That Help Leaders Fund The Right Work

Good metrics do not celebrate activity. They show whether the program reduces attacker-relevant exposure while staying operationally safe.

| Metric | What It Tells You | What To Do When It Looks Bad |
|---|---|---|
| Time to remediate for exposed high-risk issues | How long attackers have an opportunity window | Tighten emergency lane and ownership |
| Coverage of critical assets | Whether the program protects what matters most | Fix inventory and add missing surfaces |
| Verification rate | Whether remediation is real or assumed | Add independent checks and sampling |
| Failure and rollback rate | Whether change practices are safe | Improve pilot rings and release discipline |
| Exception age and expiry compliance | Whether risk is being managed or ignored | Enforce timeboxing and mitigations |
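
Two of these metrics, time to remediate and verification rate, can be derived from a simple list of remediation records. The sketch below is one possible calculation, assuming a hypothetical `Remediation` record and using the median as the summary statistic; your ticketing or scanning data will likely need mapping into this shape first.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median
from typing import List, Optional


@dataclass
class Remediation:
    detected: date
    remediated: Optional[date]  # None while the issue is still open
    verified: bool              # confirmed by an independent check


def median_days_to_remediate(items: List[Remediation]) -> Optional[float]:
    """Median days from detection to remediation, over closed items only."""
    durations = [(r.remediated - r.detected).days for r in items if r.remediated]
    return median(durations) if durations else None


def verification_rate(items: List[Remediation]) -> Optional[float]:
    """Share of closed items whose fix was independently verified."""
    closed = [r for r in items if r.remediated]
    if not closed:
        return None
    return sum(r.verified for r in closed) / len(closed)


items = [
    Remediation(date(2025, 1, 1), date(2025, 1, 4), verified=True),
    Remediation(date(2025, 1, 1), date(2025, 1, 8), verified=False),
    Remediation(date(2025, 1, 2), None, verified=False),
]
print(median_days_to_remediate(items))  # 5.0
print(verification_rate(items))         # 0.5
```

Note that open items are excluded from both figures here, so a growing backlog would not show up in them; tracking the age of open items separately covers that gap.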

If you run patch management this way, it stops being a hygiene task. It becomes a core cybersecurity control that reduces predictable attack paths, lowers incident cost, and makes your cyber response faster when something still goes wrong.

Frequently Asked Questions (FAQ)

Vulnerability management finds and ranks issues. Patch management is the part that changes systems safely and proves the fix took effect.

Keep the change record, the exact version before and after, and a verification artifact such as an authenticated scan result or device telemetry confirming the vulnerable component is no longer present.

If the vendor fix is not available or the patch window is risky, reducing exposure can be the fastest risk reduction. Disabling a vulnerable feature, tightening access, or adding segmentation can buy time without pretending the vulnerability is gone.

Patch means rebuild, redeploy, and retire old images. If teams patch running containers, they usually create drift that makes the next incident harder to investigate.

Ask whether the control actually removes reachability or meaningfully blocks exploitation, not whether it sounds reasonable. If the vulnerable component is still reachable by the attacker you care about, treat the control as temporary.
